File Info

| Exam | Cloudera Certified Administrator for Apache Hadoop (CCAH) |
|---|---|
| Number | CCA-500 |
| File Name | Cloudera.CCA-500.CertDumps.2021-02-12.33q.vcex |
| Size | 27 KB |
| Posted | Feb 12, 2021 |

| Download | Cloudera.CCA-500.CertDumps.2021-02-12.33q.vcex |

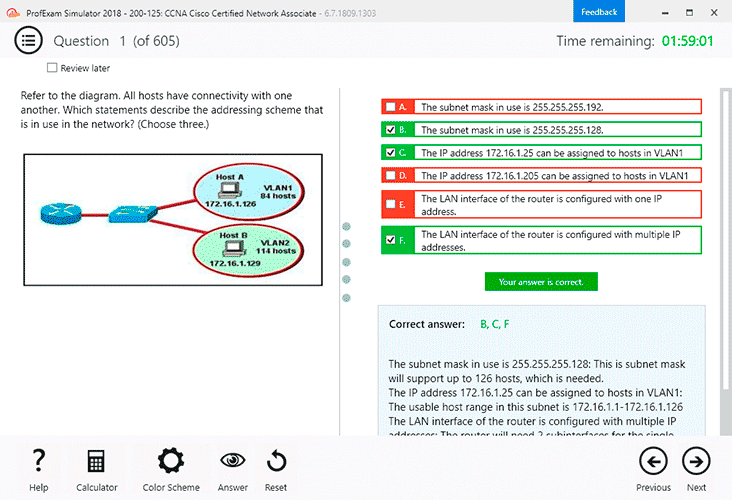

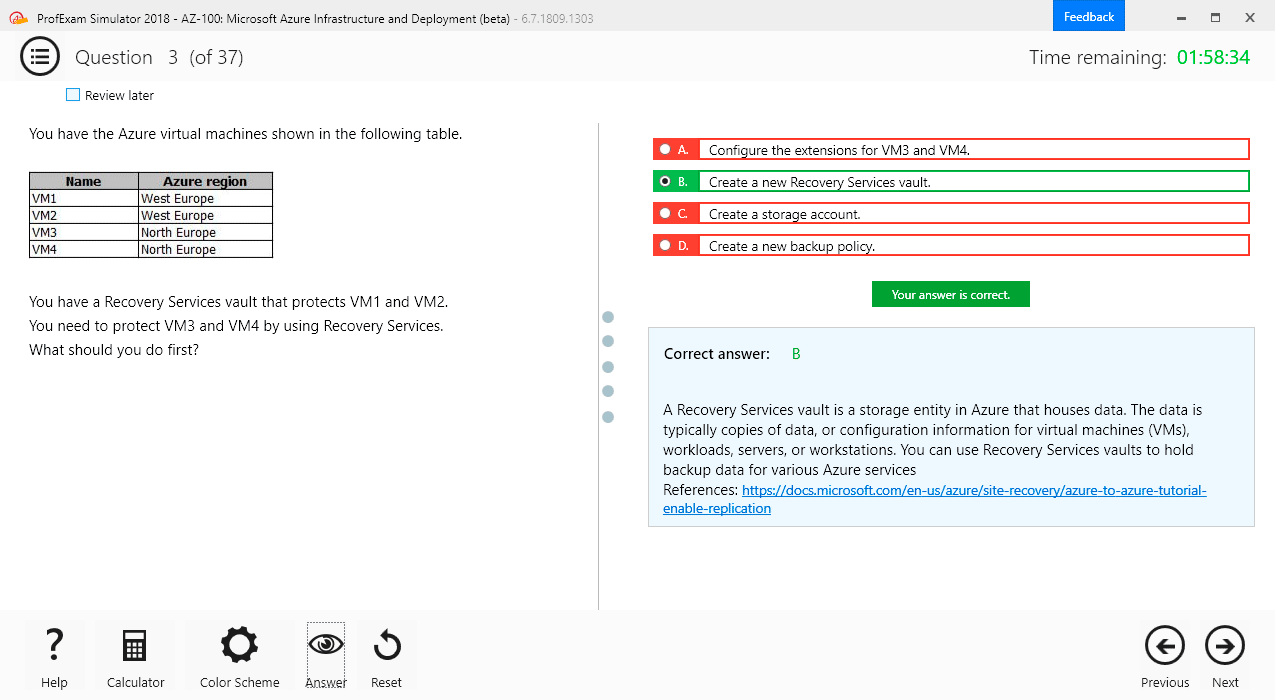

How to open VCEX & EXAM Files?

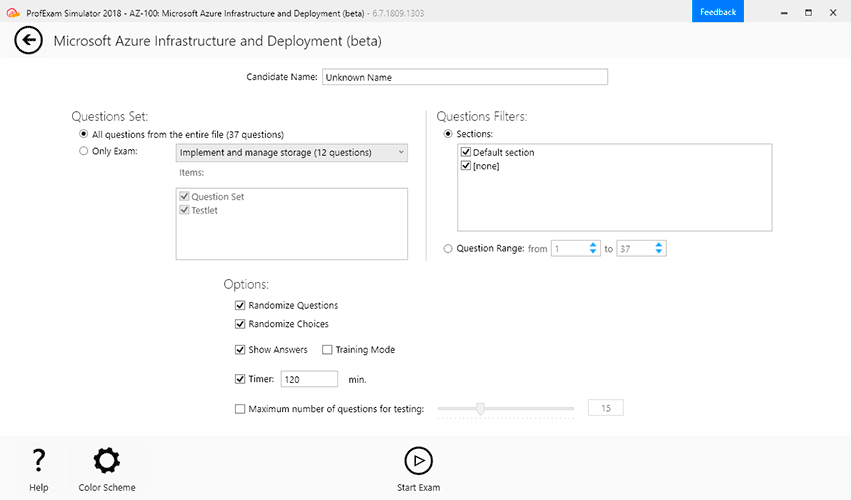

Files with VCEX & EXAM extensions can be opened by ProfExam Simulator.

Coupon: MASTEREXAM

With discount: 20%

Demo Questions

Question 1

Which command does Hadoop offer to discover missing or corrupt HDFS data?

- hdfs fs -du

- hdfs fsck

- Dskchk

- The map-only checksum

- Hadoop does not provide any tools to discover missing or corrupt data; there is no need because three replicas are kept for each data block

Correct answer: B

Question 2

You are migrating a cluster from MapReduce version 1 (MRv1) to MapReduce version 2 (MRv2) on YARN. You want to maintain your MRv1 TaskTracker slot capacities when you migrate. What should you do?

- Configure yarn.applicationmaster.resource.memory-mb and yarn.applicationmaster.resource.cpu-vcores so that ApplicationMaster container allocations match the capacity you require.

- You don’t need to configure or balance these properties in YARN as YARN dynamically balances resource management capabilities on your cluster

- Configure mapred.tasktracker.map.tasks.maximum and mapred.tasktracker.reduce.tasks.maximum in yarn-site.xml to match your cluster's capacity set by the yarn-scheduler.minimum-allocation

- Configure yarn.nodemanager.resource.memory-mb and yarn.nodemanager.resource.cpu-vcores to match the capacity you require under YARN for each NodeManager

Correct answer: D
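One rough way to carry slot capacity across is to multiply the old slot counts by a per-container size and write the totals into the two NodeManager properties. The sketch below assumes a hypothetical node with 8 map slots and 4 reduce slots, 1024 MB and one vcore per container, and a one-slot-equals-one-container mapping; the helper names are illustrative, not part of Hadoop.

```python
import xml.etree.ElementTree as ET


def nodemanager_capacity(map_slots, reduce_slots, container_mb, vcores_per_container=1):
    """Translate MRv1 slot counts into NodeManager totals (assumes 1 slot = 1 container)."""
    containers = map_slots + reduce_slots
    return {
        "yarn.nodemanager.resource.memory-mb": containers * container_mb,
        "yarn.nodemanager.resource.cpu-vcores": containers * vcores_per_container,
    }


def to_yarn_site(props):
    """Render properties in the <configuration><property> layout used by yarn-site.xml."""
    root = ET.Element("configuration")
    for name, value in props.items():
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = str(value)
    return ET.tostring(root, encoding="unicode")


# Hypothetical MRv1 node: 8 map slots + 4 reduce slots, 1 GB per slot.
props = nodemanager_capacity(map_slots=8, reduce_slots=4, container_mb=1024)
print(props)
print(to_yarn_site(props))
```

In practice the NodeManager totals should also leave headroom for the operating system and other daemons on the node; the one-to-one slot mapping is a simplification.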

Question 3

Which YARN daemon or service negotiates map and reduce Containers from the Scheduler, tracking their status and monitoring progress?

- NodeManager

- ApplicationMaster

- ApplicationManager

- ResourceManager

Correct answer: B

Question 4

You are running a Hadoop cluster with MapReduce version 2 (MRv2) on YARN. You consistently see that MapReduce map tasks on your cluster are running slowly because of excessive JVM garbage collection. How do you increase the JVM heap size property to 3 GB to optimize performance?

- yarn.application.child.java.opts=-Xsx3072m

- yarn.application.child.java.opts=-Xmx3072m

- mapreduce.map.java.opts=-Xms3072m

- mapreduce.map.java.opts=-Xmx3072m

Correct answer: D
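The JVM flag `-Xmx` sets the maximum heap size, whereas `-Xms` only sets the initial heap size. `mapreduce.map.java.opts` carries the JVM options for map tasks, and `mapreduce.map.memory.mb` is the container size those JVMs run in. A small sketch, with hypothetical helper names and a hypothetical 4096 MB container, that reads the heap sizes out of an options string and checks that the maximum heap fits in the container:

```python
import re

# Only the m/g suffixes are handled here; the JVM also accepts k and bare byte counts.
_UNITS_MB = {"m": 1, "g": 1024}


def heap_sizes_mb(java_opts):
    """Extract initial (-Xms) and maximum (-Xmx) heap sizes, in MB, from a JVM options string."""
    sizes = {"initial": None, "max": None}
    for flag, key in (("Xms", "initial"), ("Xmx", "max")):
        match = re.search(rf"-{flag}(\d+)([mMgG])\b", java_opts)
        if match:
            sizes[key] = int(match.group(1)) * _UNITS_MB[match.group(2).lower()]
    return sizes


def heap_fits_container(java_opts, container_mb):
    """The max heap must be smaller than the container so JVM overhead also fits."""
    max_heap = heap_sizes_mb(java_opts)["max"]
    return max_heap is not None and max_heap < container_mb


print(heap_sizes_mb("-Xms3072m"))  # only the initial heap is set
print(heap_sizes_mb("-Xmx3072m"))  # the maximum heap is capped at 3072 MB
print(heap_fits_container("-Xmx3072m", container_mb=4096))  # hypothetical container size
```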

Question 5

You have a cluster running with a FIFO scheduler enabled. You submit a large job A to the cluster, which you expect to run for one hour. Then, you submit job B to the cluster, which you expect to run for only a couple of minutes.

You submit both jobs with the same priority.

Which two best describe how the FIFO Scheduler arbitrates the cluster resources for a job and its tasks? (Choose two)

- Because there is more than a single job on the cluster, the FIFO Scheduler will enforce a limit on the percentage of resources allocated to a particular job at any given time

- Tasks are scheduled in the order of their job submission

- The order of execution of jobs may vary

- Given jobs A and B submitted in that order, all tasks from job A are guaranteed to finish before all tasks from job B

- The FIFO Scheduler will give, on average, an equal share of the cluster resources over the job lifecycle

- The FIFO Scheduler will pass an exception back to the client when job B is submitted, since all slots on the cluster are in use

Correct answer: BC
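FIFO behaviour can be illustrated with a toy slot model: tasks are handed out in the order their jobs were submitted, yet a later job's tasks can still start, and finish, first whenever the earlier job leaves slots idle. This is a simplified simulation under hypothetical numbers (4 slots, fixed task durations, no priorities or data locality), not Hadoop's scheduler code:

```python
import heapq
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    task_minutes: list  # hypothetical duration of each task, in minutes


def fifo_schedule(jobs, slots):
    """Dispatch tasks strictly in job-submission order to whichever slot frees up first.

    Returns {(job_name, task_index): (start, finish)}.
    """
    slot_free_at = [0] * slots  # a list of zeros is already a valid min-heap
    timeline = {}
    for job in jobs:  # jobs are given in submission order
        for i, minutes in enumerate(job.task_minutes):
            start = heapq.heappop(slot_free_at)
            timeline[(job.name, i)] = (start, start + minutes)
            heapq.heappush(slot_free_at, start + minutes)
    return timeline


# Hypothetical cluster with 4 slots: job A has two 60-minute tasks, job B two 2-minute tasks.
timeline = fifo_schedule([Job("A", [60, 60]), Job("B", [2, 2])], slots=4)
for key, (start, finish) in timeline.items():
    print(key, start, finish)
```

With `slots=2` in the same example, job A occupies both slots, so job B's tasks wait until minute 60; the dispatch order is unchanged, only the timing differs.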

Question 6

A slave node in your cluster has four 2 TB hard drives installed (4 x 2 TB). The DataNode is configured to store HDFS blocks on all disks. You set the value of the dfs.datanode.du.reserved parameter to 100 GB. How does this alter HDFS block storage?

- 25 GB on each hard drive may not be used to store HDFS blocks

- 100 GB on each hard drive may not be used to store HDFS blocks

- All hard drives may be used to store HDFS blocks as long as at least 100 GB in total is available on the node

- A maximum of 100 GB on each hard drive may be used to store HDFS blocks

Correct answer: B
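The Hadoop documentation describes `dfs.datanode.du.reserved` as reserved space in bytes per volume, so the reservation is subtracted from every data directory rather than once per node. A quick arithmetic sketch for this node, treating 1 TB as 1000 GB for round numbers and ignoring any other non-DFS usage (both simplifying assumptions):

```python
GB_PER_TB = 1000  # simplification: decimal units, as drive capacities are usually quoted
BYTES_PER_GIB = 1024 ** 3


def usable_per_volume_gb(volume_sizes_gb, reserved_per_volume_gb):
    """Capacity left for HDFS blocks on each volume after the per-volume reservation."""
    return [max(size - reserved_per_volume_gb, 0) for size in volume_sizes_gb]


volumes_gb = [2 * GB_PER_TB] * 4  # four 2 TB drives
usable = usable_per_volume_gb(volumes_gb, reserved_per_volume_gb=100)
print(usable)       # 100 GB held back on every drive
print(sum(usable))  # total HDFS capacity of the node
# The property itself takes bytes; 100 GB expressed in binary units:
print(100 * BYTES_PER_GIB)
```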

Question 7

You want to understand more about how users browse your public website. For example, you want to know which pages they visit prior to placing an order. You have a server farm of 200 web servers hosting your website. What is the most efficient process to gather these web server logs into your Hadoop cluster for analysis?

- Sample the web server logs from the web servers and copy them into HDFS using curl

- Ingest the server web logs into HDFS using Flume

- Channel these clickstreams into Hadoop using Hadoop Streaming

- Import all user clicks from your OLTP databases into Hadoop using Sqoop

- Write a MapReduce job with the web servers for mappers and the Hadoop cluster nodes for reducers

Correct answer: B

Explanation:

Apache Flume is a service for streaming logs into Hadoop. It is a distributed, reliable, and available service for efficiently collecting, aggregating, and moving large amounts of streaming data into the Hadoop Distributed File System (HDFS). It has a simple and flexible architecture based on streaming data flows, and is robust and fault tolerant with tunable reliability mechanisms for failover and recovery.

Question 8

Your Hadoop cluster is configured with HDFS and MapReduce version 2 (MRv2) on YARN. Can you configure a worker node to run a NodeManager daemon but not a DataNode daemon and still have a functional cluster?

- Yes. The daemon will receive data from the NameNode to run Map tasks

- Yes. The daemon will get data from another (non-local) DataNode to run Map tasks

- Yes. The daemon will receive Map tasks only

- Yes. The daemon will receive Reducer tasks only

Correct answer: B

Question 9

You have recently converted your Hadoop cluster from a MapReduce version 1 (MRv1) architecture to MapReduce version 2 (MRv2) on YARN. Your developers are accustomed to specifying the number of map and reduce tasks (resource allocation) when they run jobs. A developer wants to know how to specify the number of reduce tasks when a specific job runs.

Which method should you tell that developer to implement?

- MapReduce version 2 (MRv2) on YARN abstracts resource allocation away from the idea of tasks into memory and virtual cores, thus eliminating the need for a developer to specify the number of reduce tasks, and indeed preventing the developer from specifying the number of reduce tasks.

- In YARN, resource allocation is a function of megabytes of memory in multiples of 1024 MB. Thus, they should specify the amount of memory resource they need by executing -D mapreduce-reduces.memory-mb=2048

- In YARN, the ApplicationMaster is responsible for requesting the resources required for a specific launch. Thus, executing -D yarn.applicationmaster.reduce.tasks=2 will specify that the ApplicationMaster launch two task containers on the worker nodes.

- Developers specify reduce tasks in the exact same way for both MapReduce version 1 (MRv1) and MapReduce version 2 (MRv2) on YARN. Thus, executing -D mapreduce.job.reduces=2 will specify reduce tasks.

- In YARN, resource allocation is a function of virtual cores specified by the ApplicationManager making requests to the NodeManager, where a reduce task is handled by a single container (and thus a single virtual core). Thus, the developer needs to specify the number of virtual cores to the NodeManager by executing -D yarn.nodemanager.cpu-vcores=2

Correct answer: D
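On the command line, `-D` is a Hadoop generic option: it takes effect when the job's driver parses generic options (for example through `ToolRunner`), and generic options must come before the job's own arguments. A minimal sketch that assembles such a command, where the jar name, class name, and HDFS paths are hypothetical placeholders:

```python
import shlex


def hadoop_jar_command(jar, main_class, reduces, *job_args):
    """Build argv for `hadoop jar`, passing the reducer count as a -D generic option."""
    return [
        "hadoop", "jar", jar, main_class,
        "-D", f"mapreduce.job.reduces={reduces}",  # generic options precede job arguments
        *job_args,
    ]


cmd = hadoop_jar_command("wordcount.jar", "WordCount", 2, "/user/dev/input", "/user/dev/output")
print(shlex.join(cmd))
```

The same count can also be set in driver code with `Job.setNumReduceTasks`; either way the value ends up in `mapreduce.job.reduces`.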

Question 10

You have a 20-node Hadoop cluster, with 18 slave nodes and 2 master nodes running HDFS High Availability (HA). You want to minimize the chance of data loss in your cluster.

What should you do?

- Add another master node to increase the number of nodes running the JournalNode which increases the number of machines available to HA to create a quorum

- Set an HDFS replication factor that provides data redundancy, protecting against node failure

- Run a Secondary NameNode on a different master from the NameNode in order to provide automatic recovery from a NameNode failure.

- Run the ResourceManager on a different master from the NameNode in order to load-share HDFS metadata processing

- Configure the cluster’s disk drives with an appropriate fault tolerant RAID level

Correct answer: B