File Info

| Field | Value |
|---|---|
| Exam | Cloudera Certified Developer for Apache Hadoop (CCDH) |
| Number | CCD-410 |
| File Name | Cloudera.CCD-410.ExamsKey.2018-09-15.36q.tqb |
| Size | 226 KB |
| Posted | Sep 15, 2018 |

How to open VCEX & EXAM Files?

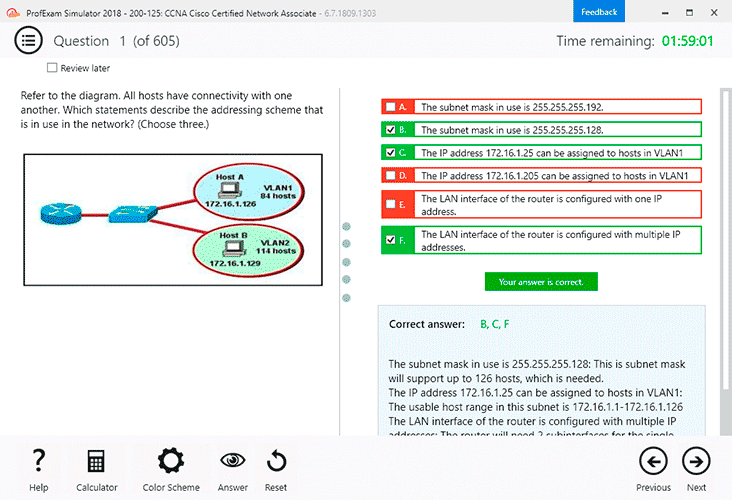

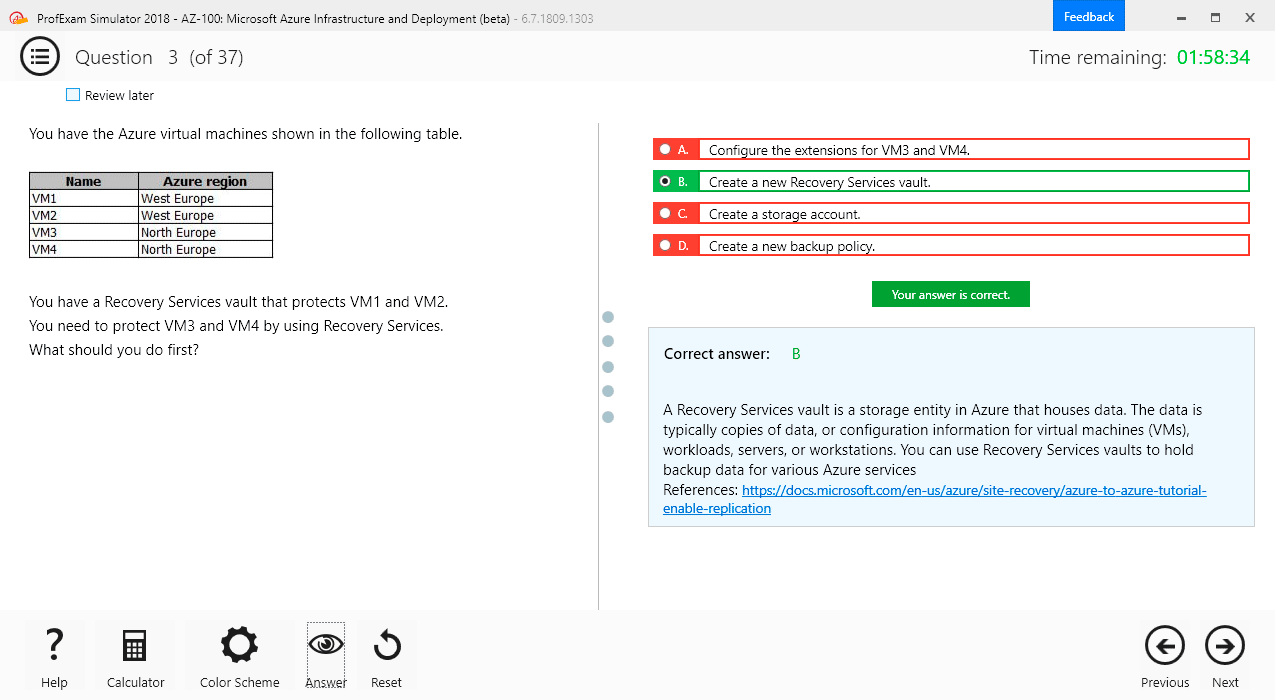

Files with VCEX & EXAM extensions can be opened by ProfExam Simulator.

Coupon: MASTEREXAM

With discount: 20%

Demo Questions

Question 1

Identify the MapReduce v2 (MRv2 / YARN) daemon responsible for launching application containers and monitoring application resource usage.

- ResourceManager

- NodeManager

- ApplicationMaster

- ApplicationMasterService

- TaskTracker

- JobTracker

Correct answer: B

Explanation:

The NodeManager is the per-node daemon that launches application containers and monitors their resource usage (CPU, memory, disk, network), reporting it to the ResourceManager. The ApplicationMaster negotiates containers and coordinates the application, but it asks the NodeManager to launch them.

The fundamental idea of MRv2 (YARN) is to split up the two major functionalities of the JobTracker, resource management and job scheduling/monitoring, into separate daemons. The idea is to have a global ResourceManager (RM) and per-application ApplicationMaster (AM). An application is either a single job in the classical sense of MapReduce jobs or a DAG of jobs.

Note: Let’s walk through an application execution sequence:

- A client program submits the application, including the necessary specifications to launch the application-specific ApplicationMaster itself.

- The ResourceManager assumes the responsibility to negotiate a specified container in which to start the ApplicationMaster and then launches the ApplicationMaster.

- The ApplicationMaster, on boot-up, registers with the ResourceManager – the registration allows the client program to query the ResourceManager for details, which allow it to directly communicate with its own ApplicationMaster.

- During normal operation the ApplicationMaster negotiates appropriate resource containers via the resource-request protocol.

- On successful container allocations, the ApplicationMaster launches the container by providing the container launch specification to the NodeManager. The launch specification, typically, includes the necessary information to allow the container to communicate with the ApplicationMaster itself.

- The application code executing within the container then provides necessary information (progress, status etc.) to its ApplicationMaster via an application-specific protocol.

- During the application execution, the client that submitted the program communicates directly with the ApplicationMaster to get status, progress updates etc. via an application-specific protocol.

- Once the application is complete, and all necessary work has been finished, the ApplicationMaster deregisters with the ResourceManager and shuts down, allowing its own container to be repurposed.

Reference: Apache Hadoop YARN – Concepts & Applications
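The execution sequence above can be traced with a toy model. This is only a sketch of the message flow, not the YARN API: the class and method names (`submit`, `allocate`, `start_container`, `run`) are hypothetical, a single node is assumed, and scheduling, heartbeats, and failure handling are left out.

```python
from dataclasses import dataclass, field


@dataclass
class NodeManager:
    """Per-node agent: launches a container when handed a launch specification."""
    launched: list = field(default_factory=list)

    def start_container(self, container_id: int, launch_spec: str) -> None:
        self.launched.append((container_id, launch_spec))


@dataclass
class ResourceManager:
    """Global daemon: allocates containers and tracks registered ApplicationMasters."""
    node_manager: NodeManager
    registered: set = field(default_factory=set)
    next_id: int = 0

    def allocate(self) -> int:
        self.next_id += 1
        return self.next_id

    def submit(self, app_name: str) -> "ApplicationMaster":
        # The client submits the application; the RM negotiates a container
        # for the ApplicationMaster and has it launched.
        am_container = self.allocate()
        self.node_manager.start_container(am_container, f"AM for {app_name}")
        return ApplicationMaster(app_name, self)


@dataclass
class ApplicationMaster:
    name: str
    rm: ResourceManager

    def run(self, tasks: list) -> list:
        events = []
        self.rm.registered.add(self.name)          # register on boot-up
        events.append("registered")
        for task in tasks:
            container_id = self.rm.allocate()      # negotiate a container
            # Hand the launch specification to the NodeManager.
            self.rm.node_manager.start_container(container_id, task)
            events.append(f"launched {task}")
        self.rm.registered.discard(self.name)      # deregister when done
        events.append("deregistered")
        return events


nm = NodeManager()
rm = ResourceManager(nm)
am = rm.submit("wordcount")
events = am.run(["map-0", "reduce-0"])
```

In this model every container, including the one that hosts the ApplicationMaster, is started through `NodeManager.start_container`, which mirrors the division of labor described in the walkthrough.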

Question 2

Which best describes how TextInputFormat processes input files and line breaks?

- Input file splits may cross line breaks. A line that crosses file splits is read by the RecordReader of the split that contains the beginning of the broken line.

- Input file splits may cross line breaks. A line that crosses file splits is read by the RecordReaders of both splits containing the broken line.

- The input file is split exactly at the line breaks, so each RecordReader will read a series of complete lines.

- Input file splits may cross line breaks. A line that crosses file splits is ignored.

- Input file splits may cross line breaks. A line that crosses file splits is read by the RecordReader of the split that contains the end of the broken line.

Correct answer: A

Explanation:

As the Map operation is parallelized, the input file set is first split into several pieces called FileSplits. If an individual file is so large that it will affect seek time, it will be split into several splits. The splitting does not know anything about the input file's internal logical structure; for example, line-oriented text files are split on arbitrary byte boundaries. Then a new map task is created per FileSplit.

When an individual map task starts, it will open a new output writer per configured reduce task. It will then proceed to read its FileSplit using the RecordReader it gets from the specified InputFormat. InputFormat parses the input and generates key-value pairs. InputFormat must also handle records that may be split on the FileSplit boundary. For example, TextInputFormat will read the last line of the FileSplit past the split boundary and, when reading other than the first FileSplit, TextInputFormat ignores the content up to the first newline. A line that crosses a split boundary is therefore read exactly once, by the RecordReader of the split that contains the beginning of the line.

Reference: How Map and Reduce operations are actually carried out
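The boundary handling described above can be illustrated with a small simulation. This is a simplified sketch loosely modelled on that behavior, not Hadoop's actual reader: `read_split` is a hypothetical helper, the whole input is held in memory, and only `\n` line endings are considered.

```python
def read_split(data: bytes, start: int, length: int) -> list:
    """Return the lines a reader for the byte range [start, start + length) emits."""
    end = start + length
    pos = start
    if start != 0:
        # Not the first split: discard everything up to and including the
        # first newline; the previous split's reader is responsible for it.
        newline = data.find(b"\n", pos)
        pos = len(data) if newline == -1 else newline + 1
    lines = []
    # Keep reading while the next line begins at or before the split end,
    # even if that line runs past the boundary. The `<=` pairs with the
    # unconditional skip above: a line starting exactly on a boundary is
    # read by the earlier split and skipped by the later one.
    while pos <= end and pos < len(data):
        newline = data.find(b"\n", pos)
        next_pos = len(data) if newline == -1 else newline + 1
        lines.append(data[pos:next_pos].rstrip(b"\n").decode())
        pos = next_pos
    return lines


data = b"alpha\nbravo\ncharlie\ndelta\n"
splits = [(0, 9), (9, 9), (18, 8)]  # (start, length); both boundaries fall mid-line
per_split = [read_split(data, s, n) for s, n in splits]
```

Here "bravo" straddles the first boundary and "charlie" the second. Each is emitted once, by the split in which it begins, and no line is lost or duplicated.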

Question 3

For each input key-value pair, mappers can emit:

- As many intermediate key-value pairs as designed. There are no restrictions on the types of those key-value pairs (i.e., they can be heterogeneous).

- As many intermediate key-value pairs as designed, but they cannot be of the same type as the input key-value pair.

- One intermediate key-value pair, of a different type.

- One intermediate key-value pair, but of the same type.

- As many intermediate key-value pairs as designed, as long as all the keys have the same types and all the values have the same type.

Correct answer: E

Explanation:

Mapper maps input key/value pairs to a set of intermediate key/value pairs.

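Maps are the individual tasks that transform input records into intermediate records. The transformed intermediate records do not need to be of the same type as the input records. A given input pair may map to zero or many output pairs. However, all intermediate keys must share one type and all intermediate values must share one type, as declared for the job.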

Reference: Hadoop Map-Reduce Tutorial
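As a rough illustration of this contract, the sketch below uses a hypothetical `word_mapper` function (plain Python, not the Hadoop API). It assumes an input pair of (byte offset, line of text) and emits zero or more intermediate pairs whose keys are all strings and whose values are all integers.

```python
from typing import Iterator, Tuple


def word_mapper(offset: int, line: str) -> Iterator[Tuple[str, int]]:
    """Emit one (word, 1) pair per word; every key is a str, every value an int."""
    for word in line.split():
        yield (word, 1)


pairs = list(word_mapper(0, "to be or not to be"))  # many pairs from one input
empty = list(word_mapper(19, ""))                   # zero pairs from one input
```

The input pair type (int, str) differs from the output pair type (str, int), which is allowed; what stays fixed is that every emitted key has the same type and every emitted value has the same type.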