File Info

| Field | Value |
| --- | --- |
| Exam | Implementing a SQL Data Warehouse |
| Number | 70-767 |
| File Name | Microsoft.70-767.PremDumps.2019-03-29.62q.tqb |
| Size | 3 MB |
| Posted | Mar 29, 2019 |

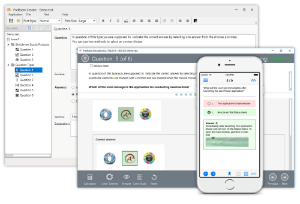

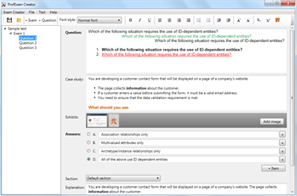

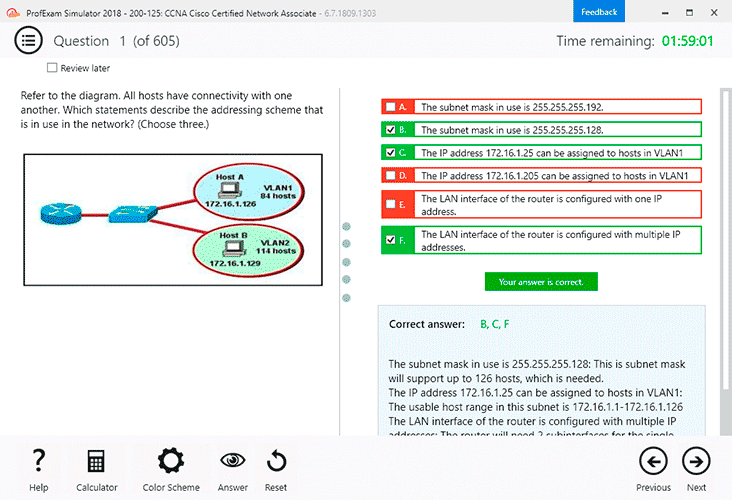

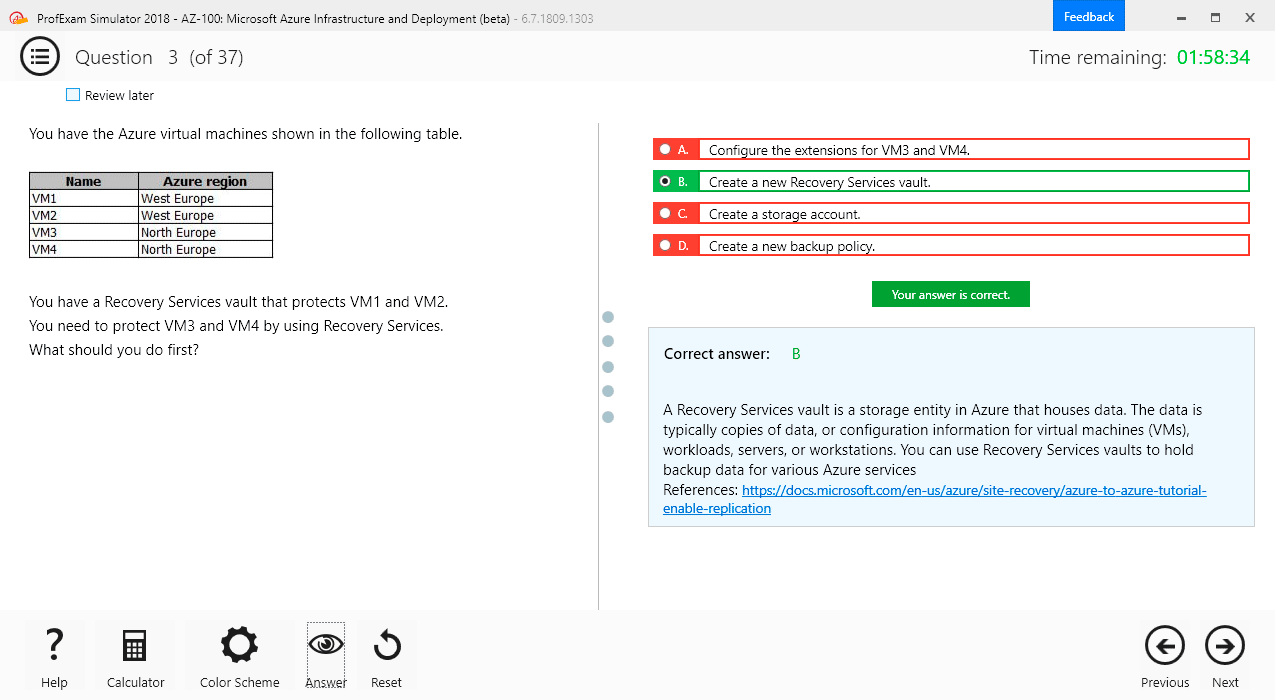

How to open VCEX & EXAM Files?

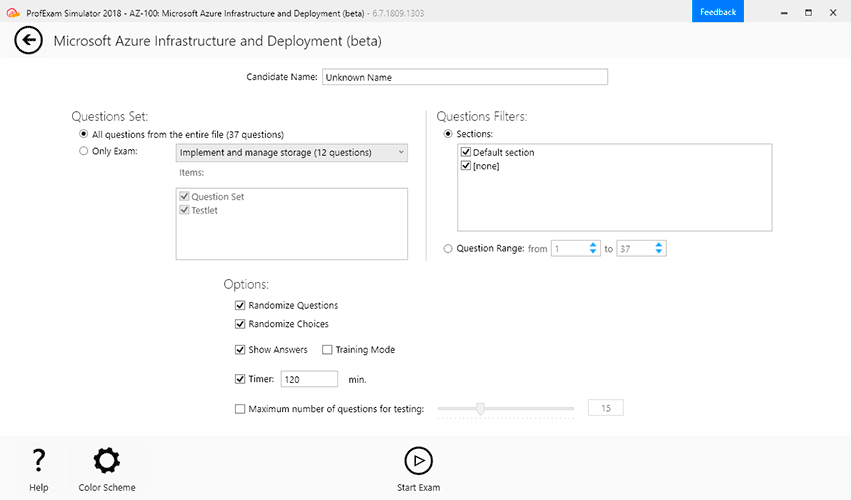

Files with VCEX & EXAM extensions can be opened by ProfExam Simulator.

Coupon: MASTEREXAM

With discount: 20%

Demo Questions

Question 1

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this section, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

You are developing a Microsoft SQL Server Integration Services (SSIS) project. The project consists of several packages that load data warehouse tables.

You need to extend the control flow design for each package to use the following control flow while minimizing development efforts and maintenance:

Solution: You add the control flow to a control flow package part. You add an instance of the control flow package part to each data warehouse load package.

Does the solution meet the goal?

- A. Yes

- B. No

Correct answer: A

Explanation:

A package consists of a control flow and, optionally, one or more data flows. You create the control flow in a package by using the Control Flow tab in SSIS Designer. A control flow package part is a reusable piece of control flow that is authored once and then added to each package that needs it, so later changes are made in a single place.

References: https://docs.microsoft.com/en-us/sql/integration-services/control-flow/control-flow

Question 2

Note: This question is part of a series of questions that use the same scenario. For your convenience, the scenario is repeated in each question. Each question presents a different goal and answer choices, but the text of the scenario is exactly the same in each question in this series.

You have a Microsoft SQL Server data warehouse instance that supports several client applications.

The data warehouse includes the following tables: Dimension.SalesTerritory, Dimension.Customer, Dimension.Date, Fact.Ticket, and Fact.Order. The Dimension.SalesTerritory and Dimension.Customer tables are frequently updated. The Fact.Order table is optimized for weekly reporting, but the company wants to change it to daily. The Fact.Order table is loaded by using an ETL process. Indexes have been added to the table over time, but the presence of these indexes slows data loading.

All data in the data warehouse is stored on a shared SAN. All tables are in a database named DB1. You have a second database named DB2 that contains copies of production data for a development environment. The data warehouse has grown and the cost of storage has increased. Data older than one year is accessed infrequently and is considered historical.

You have the following requirements:

- Implement table partitioning to improve the manageability of the data warehouse and to avoid the need to repopulate all transactional data each night. Use a partitioning strategy that is as granular as possible.

- Partition the Fact.Order table and retain a total of seven years of data.

- Partition the Fact.Ticket table and retain seven years of data. At the end of each month, the partition structure must apply a sliding window strategy to ensure that a new partition is available for the upcoming month, and that the oldest month of data is archived and removed.

- Optimize data loading for the Dimension.SalesTerritory, Dimension.Customer, and Dimension.Date tables.

- Incrementally load all tables in the database and ensure that all incremental changes are processed.

- Maximize the performance during the data loading process for the Fact.Order partition.

- Ensure that historical data remains online and available for querying.

- Reduce ongoing storage costs while maintaining query performance for current data.

You are not permitted to make changes to the client applications.

You need to implement the data partitioning strategy.

How should you partition the Fact.Order table?

- A. Create 17,520 partitions.

- B. Use a granularity of two days.

- C. Create 2,557 partitions.

- D. Create 730 partitions.

Correct answer: C

Explanation:

We create one partition for each day, which means that a granularity of one day is used. Seven years times 365 days is 2,555. Adding two days to allow for leap years gives 2,557.

From scenario: Partition the Fact.Order table and retain a total of seven years of data.

The Fact.Order table is optimized for weekly reporting, but the company wants to change it to daily.

Maximize the performance during the data loading process for the Fact.Order partition.

Reference: https://docs.microsoft.com/en-us/azure/sql-data-warehouse/sql-data-warehouse-tables-partition
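The partition count in the explanation can be checked with a short calculation. The following is a minimal sketch, not part of the exam material: the function names and the sample start dates are assumptions chosen for illustration, and the 15,000 figure is the per-table partition limit documented for SQL Server 2012 and later.

```python
from datetime import date

# Per-table partition limit documented for SQL Server 2012 and later.
MAX_PARTITIONS = 15_000


def daily_partitions(start: date, years: int) -> int:
    """Count one-day partitions covering `years` calendar years from `start`.

    Assumes `start` is not February 29, so the same day exists in the end year.
    """
    end = start.replace(year=start.year + years)
    return (end - start).days


def hourly_partitions(start: date, years: int) -> int:
    """Count one-hour partitions over the same window."""
    return daily_partitions(start, years) * 24


if __name__ == "__main__":
    # A seven-year window that contains two leap years (2012 and 2016).
    print(daily_partitions(date(2012, 1, 1), 7))   # 2557
    # A seven-year window that contains only one leap year (2016).
    print(daily_partitions(date(2013, 1, 1), 7))   # 2556
    # Hourly partitions would exceed the limit, so daily is the finest fit.
    print(hourly_partitions(date(2012, 1, 1), 7) > MAX_PARTITIONS)  # True
```

Sizing for 2,557 partitions covers the worst case of two leap days inside any seven-year window.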

Question 3

Note: This question is part of a series of questions that use the same scenario. For your convenience, the scenario is repeated in each question. Each question presents a different goal and answer choices, but the text of the scenario is exactly the same in each question in this series.

You have a Microsoft SQL Server data warehouse instance that supports several client applications.

The data warehouse includes the following tables: Dimension.SalesTerritory, Dimension.Customer, Dimension.Date, Fact.Ticket, and Fact.Order. The Dimension.SalesTerritory and Dimension.Customer tables are frequently updated. The Fact.Order table is optimized for weekly reporting, but the company wants to change it to daily. The Fact.Order table is loaded by using an ETL process. Indexes have been added to the table over time, but the presence of these indexes slows data loading.

All data in the data warehouse is stored on a shared SAN. All tables are in a database named DB1. You have a second database named DB2 that contains copies of production data for a development environment. The data warehouse has grown and the cost of storage has increased. Data older than one year is accessed infrequently and is considered historical.

You have the following requirements:

- Implement table partitioning to improve the manageability of the data warehouse and to avoid the need to repopulate all transactional data each night. Use a partitioning strategy that is as granular as possible.

- Partition the Fact.Order table and retain a total of seven years of data.

- Partition the Fact.Ticket table and retain seven years of data. At the end of each month, the partition structure must apply a sliding window strategy to ensure that a new partition is available for the upcoming month, and that the oldest month of data is archived and removed.

- Optimize data loading for the Dimension.SalesTerritory, Dimension.Customer, and Dimension.Date tables.

- Incrementally load all tables in the database and ensure that all incremental changes are processed.

- Maximize the performance during the data loading process for the Fact.Order partition.

- Ensure that historical data remains online and available for querying.

- Reduce ongoing storage costs while maintaining query performance for current data.

You are not permitted to make changes to the client applications.

You need to optimize the storage for the data warehouse.

What change should you make?

- A. Partition the Fact.Order table, and move historical data to new filegroups on lower-cost storage.

- B. Create new tables on lower-cost storage, move the historical data to the new tables, and then shrink the database.

- C. Remove the historical data from the database to leave available space for new data.

- D. Move historical data to new tables on lower-cost storage.

- E. Implement row compression for the Fact.Order table.

- F. Move the index for the Fact.Order table to lower-cost storage.

Correct answer: A

Explanation:

Partitioning the Fact.Order table allows partitions that hold historical data to be placed on filegroups backed by lower-cost storage, while the data stays online and available for querying. Create the load staging table in the same filegroup as the partition you are loading.

Create the unload staging table in the same filegroup as the partition you are deleting.

From scenario: The data warehouse has grown and the cost of storage has increased. Data older than one year is accessed infrequently and is considered historical.

References: https://blogs.msdn.microsoft.com/sqlcat/2013/09/16/top-10-best-practices-for-building-a-large-scale-relational-data-warehouse/
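The one-year rule from the scenario can be expressed as a small routing function that decides which filegroup a daily partition belongs to. This is a sketch under stated assumptions: the filegroup names `FG_Current` and `FG_Historical` and the function `filegroup_for` are hypothetical and do not come from the scenario, and the actual move would be done in SQL Server with partition functions and schemes.

```python
from datetime import date

# Hypothetical filegroup names, used only for illustration.
CURRENT_FG = "FG_Current"        # fast storage for data from the last year
HISTORICAL_FG = "FG_Historical"  # lower-cost storage for older data


def filegroup_for(partition_day: date, today: date) -> str:
    """Return the filegroup for a daily partition.

    Data older than one year is treated as historical, per the scenario.
    """
    try:
        cutoff = today.replace(year=today.year - 1)
    except ValueError:
        # `today` is February 29 and the previous year has no such day.
        cutoff = today.replace(year=today.year - 1, day=28)
    return HISTORICAL_FG if partition_day < cutoff else CURRENT_FG


if __name__ == "__main__":
    today = date(2019, 3, 29)
    print(filegroup_for(date(2018, 3, 28), today))  # FG_Historical
    print(filegroup_for(date(2018, 3, 29), today))  # FG_Current
    print(filegroup_for(date(2019, 1, 1), today))   # FG_Current
```

Because the historical partitions stay in the same table, client applications keep querying Fact.Order unchanged, which matches the constraint that the applications cannot be modified.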