File Info

| Exam | Administering Relational Databases on Microsoft Azure |
|---|---|
| Number | DP-300 |
| File Name | Microsoft.DP-300.Dump4Pass.2024-11-11.140q.vcex |
| Size | 8 MB |
| Posted | Nov 11, 2024 |


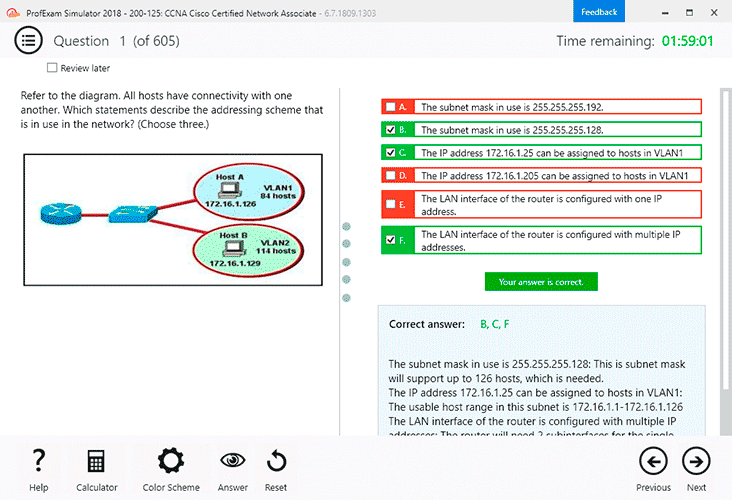

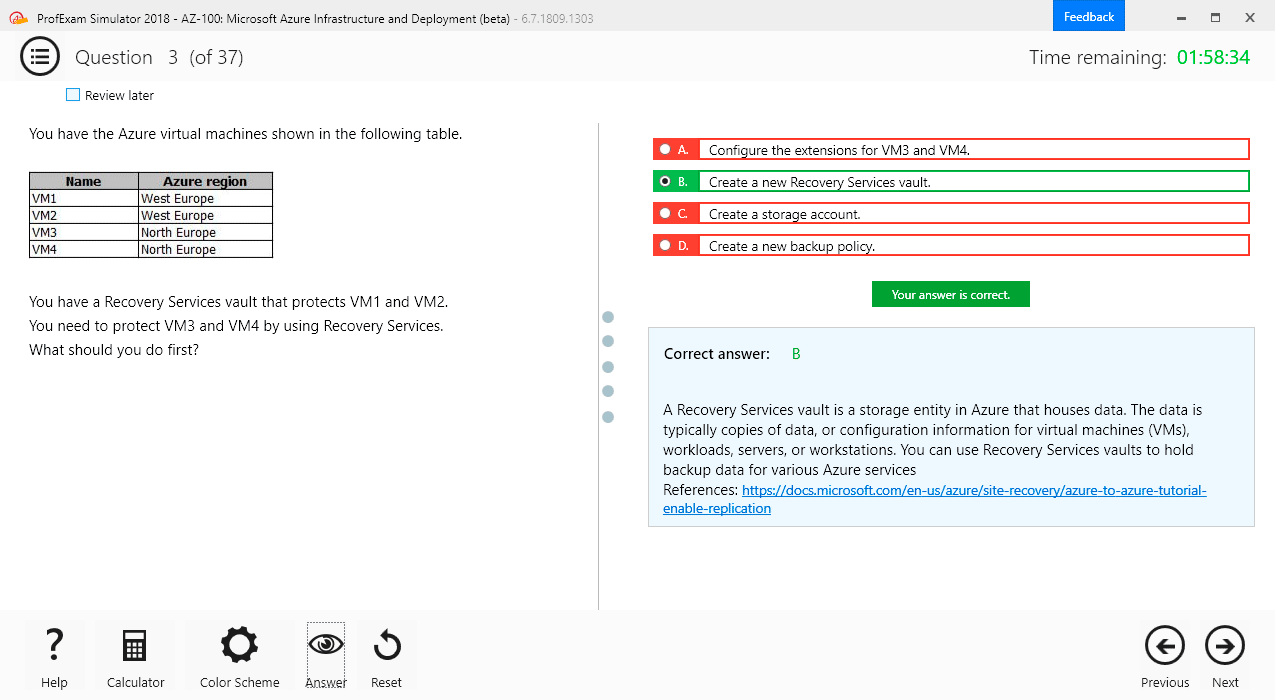


Demo Questions

Question 1

You need to design a data retention solution for the Twitter feed data records. The solution must meet the customer sentiment analytics requirements.

Which Azure Storage functionality should you include in the solution?

- A. time-based retention

- B. change feed

- C. lifecycle management

- D. soft delete

Correct answer: C

Explanation:

The lifecycle management policy lets you:

Delete blobs, blob versions, and blob snapshots at the end of their lifecycles

Scenario:

- Purge Twitter feed data records that are older than two years.

- Store Twitter feeds in Azure Storage by using Event Hubs Capture. The feeds will be converted into Parquet files.

- Minimize administrative effort to maintain the Twitter feed data records.

Incorrect Answers:

A: Time-based retention policy support: Users can set policies to store data for a specified interval. While a time-based retention policy is in effect, blobs can be created and read, but not modified or deleted. After the retention period expires, blobs can be deleted but not overwritten. The policy does not delete anything by itself, so it cannot automatically purge records that are older than two years.

Reference:

https://docs.microsoft.com/en-us/azure/storage/blobs/storage-lifecycle-management-concepts

Question 2

You need to implement the surrogate key for the retail store table. The solution must meet the sales transaction dataset requirements. What should you create?

- A. a table that has a FOREIGN KEY constraint

- B. a table that has an IDENTITY property

- C. a user-defined SEQUENCE object

- D. a system-versioned temporal table

Correct answer: B

Explanation:

Scenario: Contoso requirements for the sales transaction dataset include:

Implement a surrogate key to account for changes to the retail store addresses.

A surrogate key on a table is a column with a unique identifier for each row. The key is not generated from the table data. Data modelers like to create surrogate keys on their tables when they design data warehouse models. You can use the IDENTITY property to achieve this goal simply and effectively without affecting load performance.
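A minimal T-SQL sketch of this approach, assuming a dedicated SQL pool in Azure Synapse Analytics (the platform the reference describes). The table name `dbo.DimRetailStore`, its columns, and the `REPLICATE` distribution are hypothetical. When a store's address changes, a new row is inserted and the engine assigns it a new surrogate key, while the business key `StoreID` stays the same.

```sql
-- Hypothetical retail store dimension with an engine-generated surrogate key.
CREATE TABLE dbo.DimRetailStore
(
    StoreKey     INT IDENTITY(1, 1) NOT NULL, -- surrogate key, not derived from table data
    StoreID      INT               NOT NULL,  -- business (natural) key from the source system
    StoreAddress NVARCHAR(200)     NOT NULL,
    ValidFrom    DATE              NOT NULL,
    ValidTo      DATE              NULL
)
WITH
(
    DISTRIBUTION = REPLICATE -- assumption: small dimension table copied to every compute node
);

-- Original address for store 101.
INSERT INTO dbo.DimRetailStore (StoreID, StoreAddress, ValidFrom, ValidTo)
VALUES (101, N'1 Main Street', '2023-01-01', '2023-12-31');

-- Address change: same StoreID, new row, new StoreKey assigned by IDENTITY.
INSERT INTO dbo.DimRetailStore (StoreID, StoreAddress, ValidFrom, ValidTo)
VALUES (101, N'25 Harbor Road', '2024-01-01', NULL);
```

In a dedicated SQL pool, IDENTITY values are unique but are not guaranteed to be contiguous or to follow insert order, and an IDENTITY column cannot be used as the hash distribution column.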

Reference:

https://docs.microsoft.com/en-us/azure/synapse-analytics/sql-data-warehouse/sql-data-warehouse-tables-identity

Question 3

You need to design an analytical storage solution for the transactional data. The solution must meet the sales transaction dataset requirements. What should you include in the solution? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Correct answer: To work with this question, an Exam Simulator is required.

Explanation:

Box 1: Hash

Scenario:

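Ensure that queries joining and filtering sales transaction records based on product ID complete as quickly as possible.

A hash-distributed table can deliver the highest query performance for joins and aggregations on large tables. Distributing on the product ID column places all rows for a given product in the same distribution, which reduces data movement for joins on that column.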

Box 2: Round-robin

Scenario:

You plan to create a promotional table that will contain a promotion ID. The promotion ID will be associated with a specific product. The product will be identified by a product ID. The table will be approximately 5 GB.

A round-robin table is the most straightforward table to create and delivers fast performance when used as a staging table for loads. Choose round-robin distribution in scenarios such as these:

- When you cannot identify a single key to distribute your data.

- If your data doesn’t frequently join with data from other tables.

- When there are no obvious keys to join.
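As a sketch of how the two choices look in a dedicated SQL pool, the DDL below hash-distributes a sales fact table on `ProductID` and creates the promotional table with round-robin distribution. The table names and every column other than the product and promotion IDs are hypothetical.

```sql
-- Hypothetical sales fact table: rows with the same ProductID land in the same distribution.
CREATE TABLE dbo.FactSalesTransaction
(
    TransactionID   BIGINT         NOT NULL,
    ProductID       INT            NOT NULL,
    StoreKey        INT            NOT NULL,
    TransactionDate DATE           NOT NULL,
    SalesAmount     DECIMAL(18, 2) NOT NULL
)
WITH
(
    DISTRIBUTION = HASH(ProductID),
    CLUSTERED COLUMNSTORE INDEX
);

-- Hypothetical promotional table (about 5 GB): rows are spread evenly across distributions.
CREATE TABLE dbo.Promotion
(
    PromotionID INT           NOT NULL,
    ProductID   INT           NOT NULL,
    PromoName   NVARCHAR(100) NOT NULL
)
WITH
(
    DISTRIBUTION = ROUND_ROBIN,
    CLUSTERED COLUMNSTORE INDEX
);
```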

Incorrect Answers:

Replicated: Replicated tables eliminate the need to transfer data across compute nodes by replicating a full copy of the data of the specified table to each compute node. The best candidates for replicated tables are tables with sizes less than 2 GB compressed and small dimension tables.

Reference:

https://rajanieshkaushikk.com/2020/09/09/how-to-choose-right-data-distribution-strategy-for-azure-synapse/

Question 4

You have 20 Azure SQL databases provisioned by using the vCore purchasing model. You plan to create an Azure SQL Database elastic pool and add the 20 databases.

Which three metrics should you use to size the elastic pool to meet the demands of your workload? Each correct answer presents part of the solution.

NOTE: Each correct selection is worth one point.

- A. total size of all the databases

- B. geo-replication support

- C. number of concurrently peaking databases * peak CPU utilization per database

- D. maximum number of concurrent sessions for all the databases

- E. total number of databases * average CPU utilization per database

Correct answer: ACE

Explanation:

CE: Estimate the vCores needed for the pool as follows:

For the vCore-based purchasing model: MAX(<Total number of DBs × average vCore utilization per DB>, <Number of concurrently peaking DBs × peak vCore utilization per DB>)

A: Estimate the storage space needed for the pool by adding the number of bytes needed for all the databases in the pool.
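The arithmetic above can be sketched in T-SQL. The utilization figures are hypothetical sample values, not measurements; substitute the averages and peaks observed for your 20 databases. The second query reports allocated (not used) data-file space for one database and must be run in each database, with the results added together.

```sql
-- Hypothetical workload figures for the 20 databases.
DECLARE @TotalDbs        INT          = 20;
DECLARE @AvgVCoresPerDb  DECIMAL(9,2) = 0.50;
DECLARE @PeakingDbs      INT          = 4;
DECLARE @PeakVCoresPerDb DECIMAL(9,2) = 2.00;

-- MAX(total DBs x average vCores per DB, concurrently peaking DBs x peak vCores per DB)
SELECT CASE
           WHEN @TotalDbs * @AvgVCoresPerDb >= @PeakingDbs * @PeakVCoresPerDb
               THEN @TotalDbs * @AvgVCoresPerDb
           ELSE @PeakingDbs * @PeakVCoresPerDb
       END AS EstimatedPoolVCores; -- 10.00 for these sample values (20 x 0.50 vs 4 x 2.00)

-- Storage: run in each database and add the results.
-- sys.database_files reports size in 8-KB pages; ROWS limits this to data files.
SELECT SUM(CAST(size AS BIGINT)) * 8 / 1024 AS AllocatedDataMB
FROM sys.database_files
WHERE type_desc = N'ROWS';
```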

Reference:

https://docs.microsoft.com/en-us/azure/azure-sql/database/elastic-pool-overview

Question 5

You have SQL Server 2019 on an Azure virtual machine that contains an SSISDB database. A recent failure causes the master database to be lost.

You discover that all Microsoft SQL Server integration Services (SSIS) packages fail to run on the virtual machine.

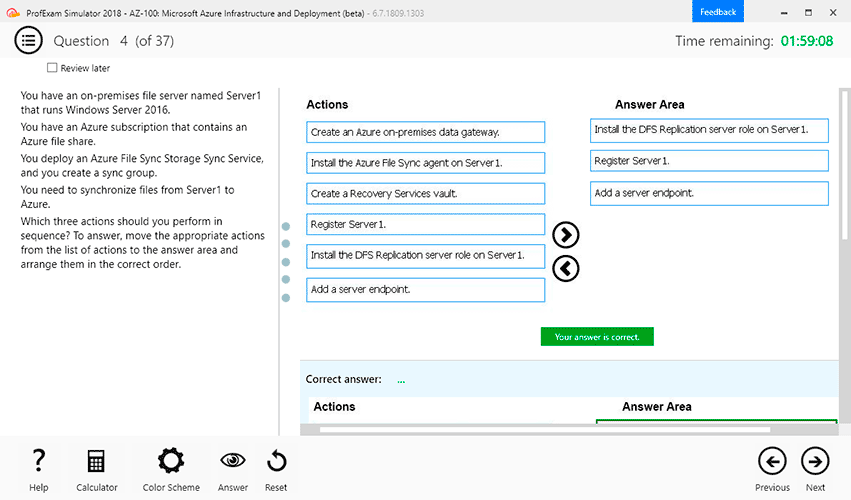

Which four actions should you perform in sequence to resolve the issue? To answer, move the appropriate actions from the list of actions to the answer area and arrange them in the correct.

Correct answer: To work with this question, an Exam Simulator is required.

Explanation:

Step 1: Attach the SSISDB database

Step 2: Turn on the TRUSTWORTHY property and the CLR property

If you are restoring the SSISDB database to a SQL Server instance where the SSISDB catalog was never created, enable the common language runtime (CLR).
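Steps 1 and 2 can be sketched in T-SQL as follows. The data and log file paths are hypothetical; use the actual locations of the SSISDB files on the virtual machine.

```sql
-- Step 1: attach SSISDB from its existing data and log files (hypothetical paths).
CREATE DATABASE SSISDB
    ON (FILENAME = N'F:\Data\SSISDB.mdf'),
       (FILENAME = N'F:\Log\SSISDB.ldf')
    FOR ATTACH;
GO

-- Step 2a: enable the common language runtime at the instance level.
EXEC sp_configure 'clr enabled', 1;
RECONFIGURE;
GO

-- Step 2b: turn on the TRUSTWORTHY property for SSISDB.
ALTER DATABASE SSISDB SET TRUSTWORTHY ON;
GO
```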

Step 3: Open the master key for the SSISDB database

Restore the master key by this method if you have the original password that was used to create SSISDB.

```sql
-- Run in the SSISDB database
USE SSISDB;
OPEN MASTER KEY DECRYPTION BY PASSWORD = 'LS1Setup!'; -- password used when creating SSISDB
ALTER MASTER KEY ADD ENCRYPTION BY SERVICE MASTER KEY;
```

Step 4: Encrypt a copy of the master key by using the service master key

Reference:

https://docs.microsoft.com/en-us/sql/integration-services/backup-restore-and-move-the-ssis-catalog

Question 6

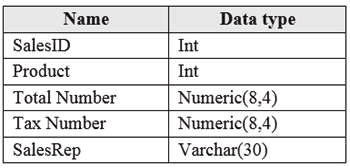

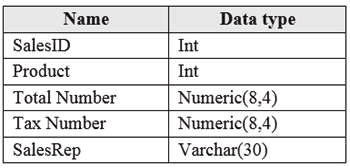

You have an Azure SQL database that contains a table named FactSales. FactSales contains the columns shown in the following table.

FactSales has 6 billion rows and is loaded nightly by using a batch process. You must provide the greatest reduction in space for the database and maximize performance.

Which type of compression provides the greatest space reduction for the database?

- A. page compression

- B. row compression

- C. columnstore compression

- D. columnstore archival compression

Correct answer: D

Explanation:

Columnstore tables and indexes are always stored with columnstore compression. You can further reduce the size of columnstore data by configuring an additional compression called archival compression.

Note: The columnstore index is also logically organized as a table with rows and columns, but the data is physically stored in a column-wise data format.

Incorrect Answers:

B: Rowstore: The rowstore index is the traditional style that has been around since the initial release of SQL Server. For rowstore tables and indexes, use the data compression feature to help reduce the size of the database.
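A minimal sketch of applying archival compression to FactSales, assuming the table is currently a heap and that the index name `CCI_FactSales` is hypothetical. Archival compression trades extra CPU on decompression for the smallest storage footprint.

```sql
-- Option 1: create the clustered columnstore index with archival compression.
CREATE CLUSTERED COLUMNSTORE INDEX CCI_FactSales
    ON dbo.FactSales
    WITH (DATA_COMPRESSION = COLUMNSTORE_ARCHIVE);

-- Option 2: if the columnstore index already exists, rebuild it with archival compression.
ALTER INDEX CCI_FactSales ON dbo.FactSales
    REBUILD WITH (DATA_COMPRESSION = COLUMNSTORE_ARCHIVE);

-- Verify the compression setting of each partition.
SELECT p.partition_number, p.data_compression_desc
FROM sys.partitions AS p
WHERE p.object_id = OBJECT_ID(N'dbo.FactSales');
```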

Reference:

https://docs.microsoft.com/en-us/sql/relational-databases/data-compression/data-compression

Question 7

You have a Microsoft SQL Server 2019 database named DB1 that uses the following database-level and instance-level features.

- Clustered columnstore indexes

- Automatic tuning

- Change tracking

- PolyBase

You plan to migrate DB1 to an Azure SQL database.

What feature should be removed or replaced before DB1 can be migrated?

- A. Clustered columnstore indexes

- B. PolyBase

- C. Change tracking

- D. Automatic tuning

Correct answer: B

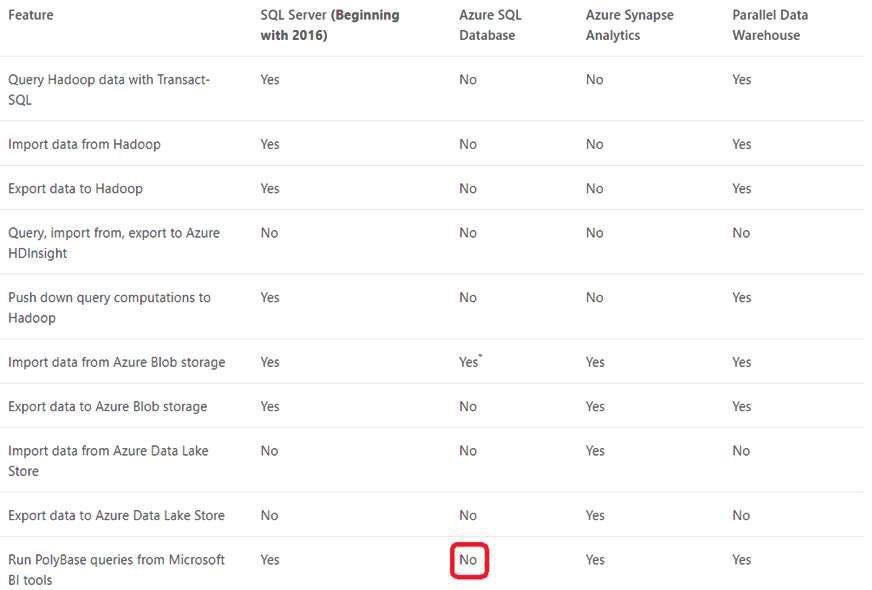

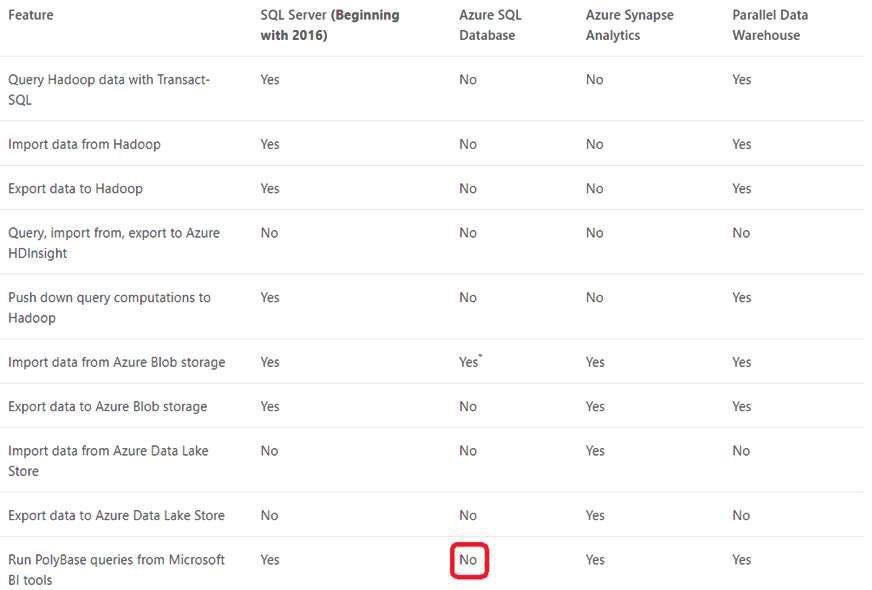

Explanation:

This table lists the key features for PolyBase and the products in which they're available. PolyBase is not supported in Azure SQL Database, so it must be removed or replaced before DB1 can be migrated.

Incorrect Answers:

C: Change tracking is a lightweight solution that provides an efficient change tracking mechanism for applications. It applies to both Azure SQL Database and SQL Server.

D: Azure SQL Database and Azure SQL Managed Instance automatic tuning provides peak performance and stable workloads through continuous performance tuning based on AI and machine learning.
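Before migrating, a quick way to check whether DB1 actually depends on PolyBase is to query the instance property and the external-object catalog views on the source server. This is a sketch, not a full migration assessment.

```sql
-- Instance level: returns 1 if the PolyBase feature is installed.
SELECT SERVERPROPERTY('IsPolyBaseInstalled') AS IsPolyBaseInstalled;

-- Database level: any rows returned are PolyBase objects that must be
-- removed or replaced before migrating DB1 to Azure SQL Database.
USE DB1;

SELECT name, location, type_desc
FROM sys.external_data_sources;

SELECT name, location
FROM sys.external_tables;
```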

Reference:

https://docs.microsoft.com/en-us/sql/relational-databases/polybase/polybase-versioned-feature-summary

Question 8

You have a Microsoft SQL Server 2019 instance in an on-premises datacenter. The instance contains a 4-TB database named DB1. You plan to migrate DB1 to an Azure SQL Database managed instance.

What should you use to minimize downtime and data loss during the migration?

- A. distributed availability groups

- B. database mirroring

- C. Always On availability group

- D. Azure Database Migration Service

Correct answer: D

Question 9

You have an on-premises Microsoft SQL Server 2016 server named Server1 that contains a database named DB1.

You need to perform an online migration of DB1 to an Azure SQL Database managed instance by using Azure Database Migration Service. How should you configure the backup of DB1? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Correct answer: To work with this question, an Exam Simulator is required.

Explanation:

Box 1: Full and log backups only

Make sure to take every backup on separate backup media (backup files). Azure Database Migration Service doesn't support backups that are appended to a single backup file. Take the full backup and the log backups to separate backup files.

Box 2: WITH CHECKSUM

Azure Database Migration Service uses the backup and restore method to migrate your on-premises databases to SQL Managed Instance. Azure Database Migration Service only supports backups created using checksum.
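A minimal sketch of backups that satisfy both boxes: each backup goes to its own file and is created WITH CHECKSUM. The UNC share path and file names are hypothetical, and DB1 is assumed to use the FULL recovery model so that log backups are possible.

```sql
-- Full backup to its own file, with page checksums verified and a backup checksum written.
BACKUP DATABASE DB1
    TO DISK = N'\\FileServer1\DMSBackups\DB1_full.bak'
    WITH CHECKSUM, INIT;

-- Each log backup goes to a new, separate file (never appended to an existing one).
BACKUP LOG DB1
    TO DISK = N'\\FileServer1\DMSBackups\DB1_log_001.trn'
    WITH CHECKSUM, INIT;
```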

Incorrect Answers:

NOINIT: Indicates that the backup set is appended to the specified media set, preserving existing backup sets. If a media password is defined for the media set, the password must be supplied. NOINIT is the default.

UNLOAD: Specifies that the tape is automatically rewound and unloaded when the backup is finished. UNLOAD is the default when a session begins.

Reference:

https://docs.microsoft.com/en-us/azure/dms/known-issues-azure-sql-db-managed-instance-online

Question 10

You have a resource group named App1Dev that contains an Azure SQL Database server named DevServer1. DevServer1 contains an Azure SQL database named DB1. The schema and permissions for DB1 are saved in a Microsoft SQL Server Data Tools (SSDT) database project.

You need to populate a new resource group named App1Test with the DB1 database and an Azure SQL Server named TestServer1. The resources in App1Test must have the same configurations as the resources in App1Dev.

Which four actions should you perform in sequence? To answer, move the appropriate actions from the list of actions to the answer area and arrange them in the correct order.

Correct answer: To work with this question, an Exam Simulator is required.

Explanation:
