File Info

| Field | Value |
| --- | --- |
| Exam | Upgrade to Oracle Database 12c |
| Number | 1z0-060 |
| File Name | Oracle.1z0-060.PracticeTest.2019-01-29.99q.vcex |
| Size | 1 MB |
| Posted | Jan 29, 2019 |

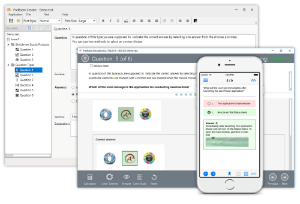

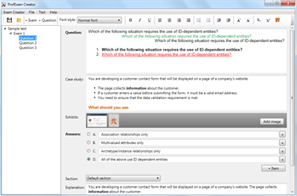

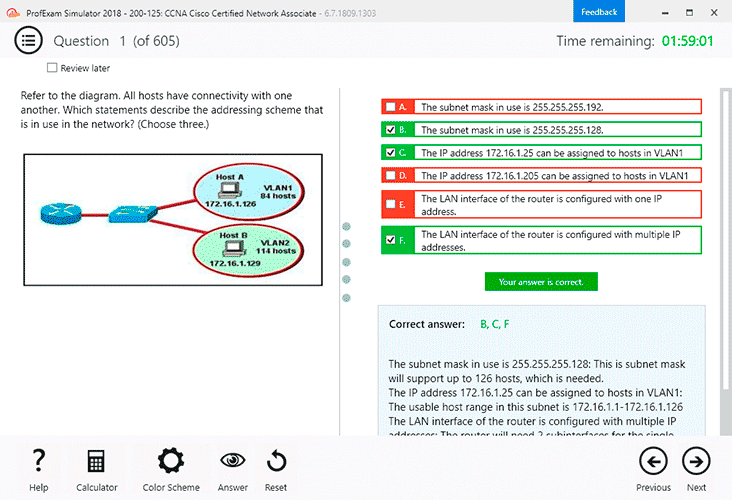

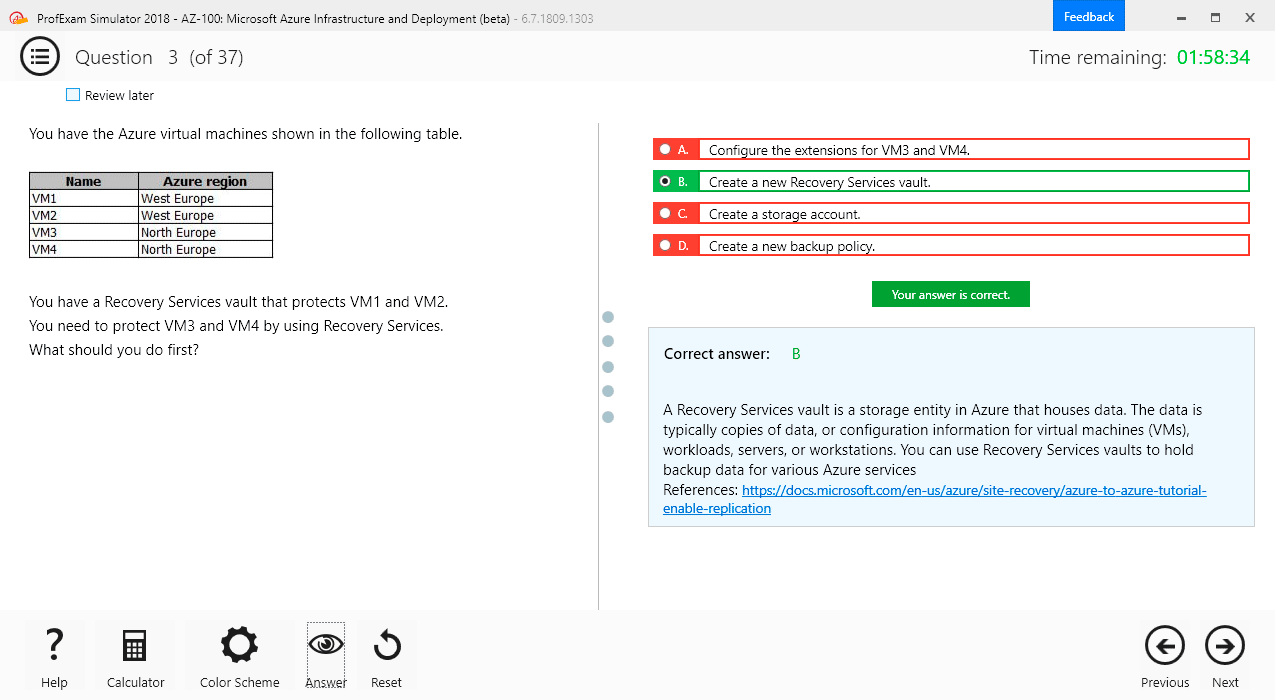

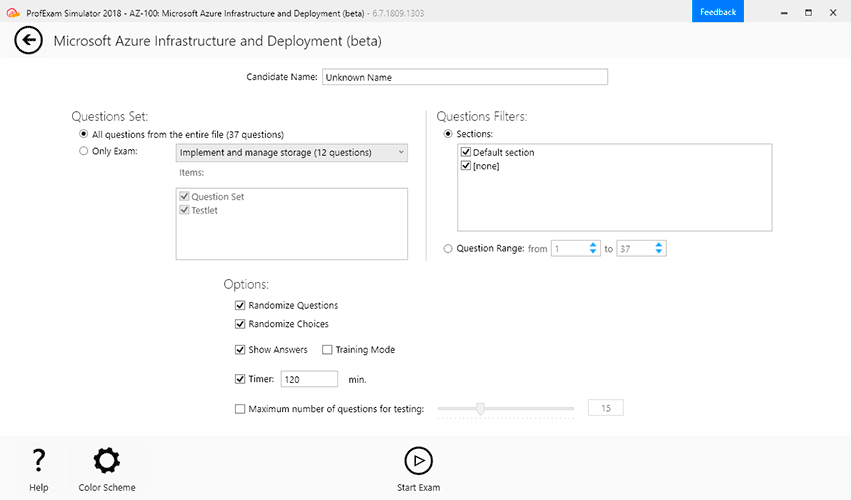


Demo Questions

Question 1

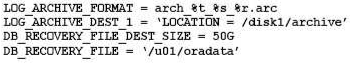

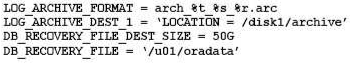

Your database is running in ARCHIVELOG mode.

The following parameters are set in your database instance:

Which statement is true about the archived redo log files?

- They are created only in the location specified by the LOG_ARCHIVE_DEST_1 parameter.

- They are created only in the Fast Recovery Area because configuring the DB_RECOVERY_FILE_DEST and DB_RECOVERY_FILE_DEST_SIZE parameters automatically enables flashback for the database.

- They are created in the location specified by the LOG_ARCHIVE_DEST_1 parameter and in the default location $ORACLE_HOME/dbs/arch.

- They are created in the location specified by the LOG_ARCHIVE_DEST_1 parameter and in the location specified by the DB_RECOVERY_FILE_DEST parameter.

Correct answer: A

Explanation:

You can choose to archive redo logs to a single destination or to multiple destinations.

Destinations can be local (within the local file system or an Oracle Automatic Storage Management (Oracle ASM) disk group) or remote (on a standby database).

When you archive to multiple destinations, a copy of each filled redo log file is written to each destination. These redundant copies help ensure that archived logs are always available in the event of a failure at one of the destinations.

To archive to only a single destination, specify that destination using the LOG_ARCHIVE_DEST initialization parameter. To archive to multiple destinations, you can choose to archive to two or more locations using the LOG_ARCHIVE_DEST_n initialization parameters, or to archive only to a primary and secondary destination using the LOG_ARCHIVE_DEST and LOG_ARCHIVE_DUPLEX_DEST initialization parameters.

When a LOG_ARCHIVE_DEST_n parameter is set explicitly, the Fast Recovery Area is not used as an implicit archive destination. It receives archived logs only if one of the destinations is set to LOCATION=USE_DB_RECOVERY_FILE_DEST.
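As a rough illustration of the LOG_ARCHIVE_DEST_n approach, the statements below set one local destination and then, optionally, send a second copy to the Fast Recovery Area. The directory path is a made-up placeholder, SCOPE=BOTH assumes the instance uses a server parameter file, and the second destination is included only to show that the recovery area has to be named explicitly once a LOG_ARCHIVE_DEST_n parameter is in use.

```sql
-- Hypothetical local archive directory; adjust to your environment.
ALTER SYSTEM SET LOG_ARCHIVE_DEST_1 = 'LOCATION=/u01/app/oracle/arch' SCOPE=BOTH;

-- Optional: also archive to the Fast Recovery Area by naming it explicitly.
ALTER SYSTEM SET LOG_ARCHIVE_DEST_2 = 'LOCATION=USE_DB_RECOVERY_FILE_DEST' SCOPE=BOTH;

-- Check which destinations are currently valid and where they point.
SELECT dest_name, status, destination
FROM   v$archive_dest
WHERE  status = 'VALID';
```

With only the first statement applied, the query would be expected to list a single valid destination, which matches answer A.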

Question 2

Your multitenant container database (CDB) contains three pluggable databases (PDBs). You find that the control file is damaged. You plan to use RMAN to recover the control file. There are no startup triggers associated with the PDBs.

Which three steps should you perform to recover the control file and make the database fully operational? (Choose three.)

- Mount the container database (CDB) and restore the control file from the control file autobackup.

- Recover and open the CDB in NORMAL mode.

- Mount the CDB and then recover and open the database, with the RESETLOGS option.

- Open all the pluggable databases.

- Recover each pluggable database.

- Start the database instance in the nomount stage and restore the control file from control file autobackup.

Correct answer: CDF

Explanation:

Step 1 (F): Start the database instance in the NOMOUNT stage and restore the control file from the control file autobackup.

Step 2 (C): Mount the CDB, then recover and open it with the RESETLOGS option. If all copies of the current control file are lost or damaged, you must restore and mount a backup control file. You must then run the RECOVER command, even if no data files have been restored, and open the database with the RESETLOGS option.

Step 3 (D): Open all the pluggable databases. Because no startup triggers are associated with the PDBs, they are not opened automatically when the CDB opens.

Note:

* RMAN and Oracle Enterprise Manager Cloud Control (Cloud Control) provide full support for backup and recovery in a multitenant environment. You can back up and recover a whole multitenant container database (CDB), the root only, or one or more pluggable databases (PDBs).

Question 3

A new report process containing a complex query is written, with high impact on the database. You want to collect basic statistics about the query, such as the level of parallelism, total database time, and the number of I/O requests.

For the database instance, the STATISTICS_LEVEL initialization parameter is set to TYPICAL and the CONTROL_MANAGEMENT_PACK_ACCESS parameter is set to DIAGNOSTIC+TUNING.

What should you do to accomplish this task?

- Execute the query and view Active Session History (ASH) for information about the query.

- Enable SQL trace for the query.

- Create a database operation, execute the query, and use the DBMS_SQL_MONITOR.REPORT_SQL_MONITOR function to view the report.

- Use the DBMS_APPLICATION_INFO.SET_SESSION_LONGOPS procedure to monitor query execution and view the information from the V$SESSION_LONGOPS view.

Correct answer: C

Explanation:

The REPORT_SQL_MONITOR function is used to return a SQL monitoring report for a specific SQL statement.

Incorrect Answers:

A: We are not interested in session statistics, only in statistics for the particular SQL query.

B: We are interested in statistics, not tracing.

D: The SET_SESSION_LONGOPS procedure sets a row in the V$SESSION_LONGOPS view. This view is used to indicate the ongoing progress of a long-running operation. Some Oracle functions, such as parallel execution and Server Managed Recovery, use rows in this view to indicate the status of, for example, a database backup.

Applications may use the SET_SESSION_LONGOPS procedure to advertise information on the progress of application-specific long-running tasks so that the progress can be monitored by way of the V$SESSION_LONGOPS view.
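The approach in answer C can be sketched in SQL*Plus as follows. This is a minimal sketch, not a verified script: the operation name REPORT_DBOP and the sample query are placeholders, and the parameter names follow the DBMS_SQL_MONITOR package as documented for 12c, so they are worth checking against the package reference for your release (REPORT_SQL_MONITOR is also available through DBMS_SQLTUNE).

```sql
VARIABLE eid NUMBER

-- Start a named database operation; the function returns its execution ID.
EXEC :eid := DBMS_SQL_MONITOR.BEGIN_OPERATION(dbop_name => 'REPORT_DBOP');

-- Run the report query here (placeholder statement).
SELECT COUNT(*) FROM sh.customers;

-- End the database operation.
EXEC DBMS_SQL_MONITOR.END_OPERATION(dbop_name => 'REPORT_DBOP', dbop_eid => :eid);

-- Make SQL*Plus print the whole CLOB report.
SET LONG 1000000
SET LONGCHUNKSIZE 1000000
SET PAGESIZE 0

-- Fetch the monitoring report for the operation as plain text.
SELECT DBMS_SQL_MONITOR.REPORT_SQL_MONITOR(
         dbop_name    => 'REPORT_DBOP',
         type         => 'TEXT',
         report_level => 'ALL') AS report
FROM   dual;
```

The report would be expected to include database time, I/O requests, and parallel execution details for the statements that ran inside the operation.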

Question 4

Identify two valid options for adding a pluggable database (PDB) to an existing multitenant container database (CDB). (Choose two.)

- Use the CREATE PLUGGABLE DATABASE statement to create a PDB using the files from the SEED.

- Use the CREATE DATABASE ... ENABLE PLUGGABLE DATABASE statement to provision a PDB by copying files from the SEED.

- Use the DBMS_PDB package to clone an existing PDB.

- Use the DBMS_PDB package to plug an Oracle 12c non-CDB database into an existing CDB.

- Use the DBMS_PDB package to plug an Oracle 11g Release 2 (11.2.0.3.0) non-CDB database into an existing CDB.

Correct answer: AD
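The two valid options (A and D) might look roughly like the following. All names, paths, and the password are hypothetical placeholders. The sketch assumes the non-CDB is an Oracle 12c database that has been opened read-only before DBMS_PDB.DESCRIBE is called, and that its data files are already where the CDB can use them (hence NOCOPY).

```sql
-- Option A: create a PDB from the seed (run in the CDB root).
CREATE PLUGGABLE DATABASE pdb_new
  ADMIN USER pdb_admin IDENTIFIED BY "ChangeMe_1"
  FILE_NAME_CONVERT = ('/u01/oradata/cdb1/pdbseed/',
                       '/u01/oradata/cdb1/pdb_new/');

ALTER PLUGGABLE DATABASE pdb_new OPEN;

-- Option D, part 1: on the 12c non-CDB (open read-only),
-- generate an XML manifest that describes the database.
BEGIN
  DBMS_PDB.DESCRIBE(pdb_descr_file => '/tmp/noncdb12c.xml');
END;
/

-- Option D, part 2: in the CDB root, plug the non-CDB in using the manifest.
CREATE PLUGGABLE DATABASE pdb_from_noncdb
  USING '/tmp/noncdb12c.xml'
  NOCOPY
  TEMPFILE REUSE;

-- Before opening the new PDB, connect to it and run
-- $ORACLE_HOME/rdbms/admin/noncdb_to_pdb.sql to convert the dictionary.
```

DBMS_PDB only generates the description file; the plug-in itself is still done with CREATE PLUGGABLE DATABASE.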

Question 5

Your database supports a DSS workload that involves the execution of complex queries. Currently, the library cache contains the ideal workload for analysis. You want to analyze some of the queries for an application that are cached in the library cache.

What must you do to receive recommendations about the efficient use of indexes and materialized views to improve query performance?

- Create a SQL Tuning Set (STS) that contains the queries cached in the library cache and run the SQL Tuning Advisor (STA) on the workload captured in the STS.

- Run the Automatic Workload Repository (AWR) report.

- Create an STS that contains the queries cached in the library cache and run the SQL Performance Analyzer (SPA) on the workload captured in the STS.

- Create an STS that contains the queries cached in the library cache and run the SQL Access Advisor on the workload captured in the STS.

- Run the Automatic Database Diagnostic Monitor (ADDM).

Correct answer: D

Explanation:

* SQL Access Advisor is primarily responsible for making schema modification recommendations, such as adding or dropping indexes and materialized views. SQL Tuning Advisor makes other types of recommendations, such as creating SQL profiles and restructuring SQL statements.

* The query optimizer can also help you tune SQL statements. By using SQL Tuning Advisor and SQL Access Advisor, you can invoke the query optimizer in advisory mode to examine a SQL statement or set of statements and determine how to improve their efficiency. SQL Tuning Advisor and SQL Access Advisor can make various recommendations, such as creating SQL profiles, restructuring SQL statements, creating additional indexes or materialized views, and refreshing optimizer statistics.

Note:

* DSS stands for decision support system.

* The library cache is a shared pool memory structure that stores executable SQL and PL/SQL code. This cache contains the shared SQL and PL/SQL areas and control structures such as locks and library cache handles.
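A sketch of answer D, under a few assumptions: the SQL tuning set name DSS_STS, the task name DSS_ACCESS_TASK, and the filter on the SH schema are all illustrative, and the block is run by a user with the ADVISOR and ADMINISTER SQL TUNING SET privileges. It loads statements from the cursor cache into an STS, links the STS to a SQL Access Advisor task, and executes the task.

```sql
DECLARE
  l_cursor  DBMS_SQLTUNE.SQLSET_CURSOR;
  l_task_id NUMBER;
  l_task    VARCHAR2(30) := 'DSS_ACCESS_TASK';  -- hypothetical task name
BEGIN
  -- Create an empty SQL tuning set.
  DBMS_SQLTUNE.CREATE_SQLSET(sqlset_name => 'DSS_STS');

  -- Select cached statements parsed by the SH schema (illustrative filter).
  OPEN l_cursor FOR
    SELECT VALUE(p)
    FROM   TABLE(DBMS_SQLTUNE.SELECT_CURSOR_CACHE(
                   basic_filter => 'parsing_schema_name = ''SH''')) p;

  -- Load them into the tuning set.
  DBMS_SQLTUNE.LOAD_SQLSET(sqlset_name     => 'DSS_STS',
                           populate_cursor => l_cursor);

  -- Create a SQL Access Advisor task and point it at the tuning set.
  DBMS_ADVISOR.CREATE_TASK(DBMS_ADVISOR.SQLACCESS_ADVISOR, l_task_id, l_task);
  DBMS_ADVISOR.ADD_STS_REF(l_task, USER, 'DSS_STS');

  -- Run the analysis.
  DBMS_ADVISOR.EXECUTE_TASK(l_task);
END;
/

-- Retrieve the recommended index and materialized view DDL as a script.
SELECT DBMS_ADVISOR.GET_TASK_SCRIPT('DSS_ACCESS_TASK') AS script FROM dual;
```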

Question 6

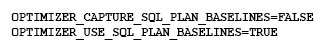

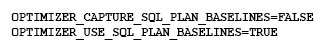

The following parameters are set for your Oracle 12c database instance:

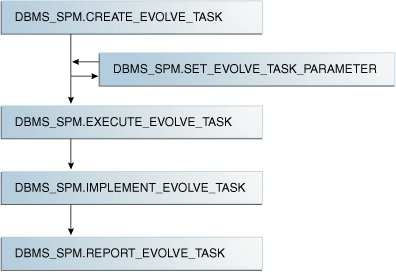

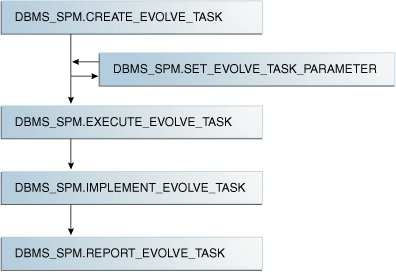

You want to manage the SQL plan evolution task manually. Examine the following steps:

1. Set the evolve task parameters.

2. Create the evolve task by using the DBMS_SPM.CREATE_EVOLVE_TASK function.

3. Implement the recommendations in the task by using the DBMS_SPM.IMPLEMENT_EVOLVE_TASK function.

4. Execute the evolve task by using the DBMS_SPM.EXECUTE_EVOLVE_TASK function.

5. Report the task outcome by using the DBMS_SPM.REPORT_EVOLVE_TASK function.

Identify the correct sequence of steps:

- 2, 4, 5

- 2, 1, 4, 3, 5

- 1, 2, 3, 4, 5

- 1, 2, 4, 5

Correct answer: B

Explanation:

* Evolving SQL Plan Baselines

2. Create the evolve task by using the DBMS_SPM.CREATE_EVOLVE_TASK function.

This function creates an advisor task to prepare the plan evolution of one or more plans for a specified SQL statement. The input parameters can be a SQL handle, plan name or a list of plan names, time limit, task name, and description.

1. Set the evolve task parameters by using the DBMS_SPM.SET_EVOLVE_TASK_PARAMETER procedure.

This procedure updates the value of an evolve task parameter. In this release, the only valid parameter is TIME_LIMIT.

4. Execute the evolve task by using the DBMS_SPM.EXECUTE_EVOLVE_TASK function.

This function executes an evolution task. The input parameters can be the task name, execution name, and execution description. If not specified, the advisor generates the name, which is returned by the function.

3. Implement the recommendations in the task by using the DBMS_SPM.IMPLEMENT_EVOLVE_TASK function.

This function implements all recommendations for an evolve task. Essentially, this function is equivalent to using ACCEPT_SQL_PLAN_BASELINE for all recommended plans. Input parameters include task name, plan name, owner name, and execution name.

5. Report the task outcome by using the DBMS_SPM.REPORT_EVOLVE_TASK function.

This function displays the results of an evolve task as a CLOB. Input parameters include the task name and section of the report to include.
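The sequence in answer B (2, 1, 4, 3, 5) could be run from SQL*Plus roughly as shown here. The SQL handle is a made-up placeholder and the 300-second time limit is arbitrary. The order follows the question's answer; in practice it is common to read the report before implementing its recommendations.

```sql
VARIABLE tname    VARCHAR2(128)
VARIABLE ename    VARCHAR2(128)
VARIABLE accepted NUMBER

-- Step 2: create the evolve task (hypothetical SQL handle).
EXEC :tname := DBMS_SPM.CREATE_EVOLVE_TASK(sql_handle => 'SQL_0123456789abcdef');

-- Step 1: set the task parameter; TIME_LIMIT is given in seconds.
EXEC DBMS_SPM.SET_EVOLVE_TASK_PARAMETER(task_name => :tname, parameter => 'TIME_LIMIT', value => 300);

-- Step 4: execute the task; the function returns the execution name.
EXEC :ename := DBMS_SPM.EXECUTE_EVOLVE_TASK(task_name => :tname);

-- Step 3: implement the recommendations; returns the number of plans accepted.
EXEC :accepted := DBMS_SPM.IMPLEMENT_EVOLVE_TASK(task_name => :tname);

-- Step 5: display the report, which is returned as a CLOB.
SET LONG 1000000
SELECT DBMS_SPM.REPORT_EVOLVE_TASK(task_name => :tname) AS report FROM dual;
```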

Question 7

In a recent Automatic Workload Repository (AWR) report for your database, you notice a high number of buffer busy waits. The database consists of locally managed tablespaces with free list managed segments.

On further investigation, you find that the buffer busy waits are caused by contention on data blocks.

Which option would you consider first to decrease the wait event immediately?

- Decreasing PCTUSED

- Decreasing PCTFREE

- Increasing the number of DBWn processes

- Using Automatic Segment Space Management (ASSM)

- Increasing db_buffer_cache based on the V$DB_CACHE_ADVICE recommendation

Correct answer: D

Explanation:

* Automatic segment space management (ASSM) is a simpler and more efficient way of managing space within a segment. It completely eliminates any need to specify and tune the PCTUSED, FREELISTS, and FREELIST GROUPS storage parameters for schema objects created in the tablespace. If any of these attributes are specified, they are ignored.

* Oracle introduced Automatic Segment Space Management (ASSM) as a replacement for traditional freelist management, which used one-way linked lists to manage free blocks within tables and indexes. ASSM is commonly called "bitmap freelists" because that is how Oracle implements the internal data structures for free block management.

Note:

* Buffer busy waits are most commonly associated with segment header contention inside the data buffer pool (db_cache_size, etc.).

* The most common remedies for high buffer busy waits include database writer (DBWR) contention tuning, adding freelists (or ASSM), and adding missing indexes.
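To make the ASSM option concrete, here is a minimal sketch of moving a contended, freelist-managed table into an ASSM tablespace. The tablespace name, data file path, and the app.hot_orders table and index are all hypothetical. Segment space management is fixed when a tablespace is created, so existing segments have to be moved into a tablespace that uses AUTO.

```sql
-- Create a locally managed tablespace that uses ASSM.
CREATE TABLESPACE app_assm
  DATAFILE '/u01/oradata/orcl/app_assm01.dbf' SIZE 500M
  EXTENT MANAGEMENT LOCAL
  SEGMENT SPACE MANAGEMENT AUTO;

-- Move the contended table into it (hypothetical table).
ALTER TABLE app.hot_orders MOVE TABLESPACE app_assm;

-- Moving a table marks its indexes UNUSABLE, so rebuild them.
ALTER INDEX app.hot_orders_pk REBUILD TABLESPACE app_assm;

-- Confirm which tablespaces use ASSM (AUTO) versus freelists (MANUAL).
SELECT tablespace_name, segment_space_management
FROM   dba_tablespaces;
```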

Question 8

Examine this command:

SQL> exec DBMS_STATS.SET_TABLE_PREFS ('SH', 'CUSTOMERS', 'PUBLISH', 'false');

Which three statements are true about the effect of this command? (Choose three.)

- Statistics collection is not done for the CUSTOMERS table when schema stats are gathered.

- Statistics collection is not done for the CUSTOMERS table when database stats are gathered.

- Any existing statistics for the CUSTOMERS table are still available to the optimizer at parse time.

- Statistics gathered on the CUSTOMERS table when schema stats are gathered are stored as pending statistics.

- Statistics gathered on the CUSTOMERS table when database stats are gathered are stored as pending statistics.

Correct answer: CDE

Explanation:

* SET_TABLE_PREFS Procedure

This procedure is used to set the statistics preferences of the specified table in the specified schema.

* Example: Using Pending Statistics

Assume many modifications have been made to the employees table since the last time statistics were gathered. To ensure that the cost-based optimizer is still picking the best plan, statistics should be gathered once again; however, the user is concerned that new statistics will cause the optimizer to choose bad plans when the current ones are acceptable. The user can do the following:

EXEC DBMS_STATS.SET_TABLE_PREFS('hr', 'employees', 'PUBLISH', 'false');

By setting the employees table's PUBLISH preference to FALSE, any statistics gathered from now on will not be automatically published. The newly gathered statistics will be marked as pending. Until they are published, the optimizer by default continues to use the existing published statistics.
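The full pending-statistics workflow for the table in the question might look like this in SQL*Plus. It is a sketch rather than a prescribed procedure: whether you publish or delete at the end depends on how the test queries behave.

```sql
-- Stop new statistics on SH.CUSTOMERS from being published automatically.
EXEC DBMS_STATS.SET_TABLE_PREFS('SH', 'CUSTOMERS', 'PUBLISH', 'FALSE');

-- Gather schema statistics; those for CUSTOMERS are stored as pending.
EXEC DBMS_STATS.GATHER_SCHEMA_STATS('SH');

-- Inspect the pending statistics.
SELECT table_name, num_rows, last_analyzed
FROM   dba_tab_pending_stats
WHERE  owner = 'SH';

-- Let this session's optimizer use pending statistics for testing.
ALTER SESSION SET optimizer_use_pending_statistics = TRUE;

-- ... run and compare the critical queries here ...

-- If the plans are acceptable, publish the pending statistics.
EXEC DBMS_STATS.PUBLISH_PENDING_STATS('SH', 'CUSTOMERS');

-- Otherwise, discard them instead:
-- EXEC DBMS_STATS.DELETE_PENDING_STATS('SH', 'CUSTOMERS');
```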

Question 9

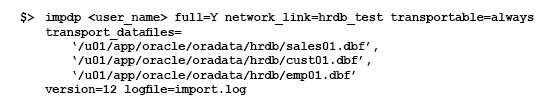

Examine the following impdp command to import a database over the network from a pre-12c Oracle database (source):

Which three are prerequisites for successful execution of the command? (Choose three.)

- The import operation must be performed by a user on the target database with the DATAPUMP_IMP_FULL_DATABASE role, and the database link must connect to a user on the source database with the DATAPUMP_EXP_FULL_DATABASE role.

- All the user-defined tablespaces must be in read-only mode on the source database.

- The export dump file must be created before starting the import on the target database.

- The source and target database must be running on the same platform with the same endianness.

- The path of data files on the target database must be the same as that on the source database.

- The impdp operation must be performed by the same user that performed the expdp operation.

Correct answer: ABD

Explanation:

In this case the impdp command runs without performing any conversion. If the endian formats of the source and target platforms differ, the data files must be converted first.

Question 10

Which two are true concerning a multitenant container database with three pluggable databases?

- All administration tasks must be done to a specific pluggable database.

- The pluggable databases increase patching time.

- The pluggable databases reduce administration effort.

- The pluggable databases are patched together.

- Pluggable databases are only used for database consolidation.

Correct answer: CE

Explanation:

The benefits of Oracle Multitenant are brought by implementing a pure deployment choice. The following list calls out the most compelling examples.

* High consolidation density. (E)

The many pluggable databases in a single multitenant container database share its memory and background processes, letting you operate many more pluggable databases on a particular platform than you can single databases that use the old architecture. This is the same benefit that schema-based consolidation brings.

* Rapid provisioning and cloning using SQL.

* New paradigms for rapid patching and upgrades. (D, not B)

The investment of time and effort to patch one multitenant container database results in patching all of its many pluggable databases. To patch a single pluggable database, you simply unplug/plug to a multitenant container database at a different Oracle Database software version.

* (C, not A) Manage many databases as one.

By consolidating existing databases as pluggable databases, administrators can manage many databases as one. For example, tasks like backup and disaster recovery are performed at the multitenant container database level.

* Dynamic resource management between pluggable databases. In Oracle Database 12c, Resource Manager is extended with specific functionality to control the competition for resources between the pluggable databases within a multitenant container database.

Note:

* Oracle Multitenant is a new option for Oracle Database 12c Enterprise Edition that helps customers reduce IT costs by simplifying consolidation, provisioning, upgrades, and more. It is supported by a new architecture that allows a multitenant container database to hold many pluggable databases. And it fully complements other options, including Oracle Real Application Clusters and Oracle Active Data Guard. An existing database can be simply adopted, with no change, as a pluggable database; and no changes are needed in the other tiers of the application.