File Info

| Field | Value |
|---|---|
| Exam | Oracle Big Data 2016 Implementation Essentials |
| Number | 1z0-449 |
| File Name | Oracle.1z0-449.CertDumps.2017-12-11.72q.vcex |
| Size | 1 MB |
| Posted | Dec 11, 2017 |


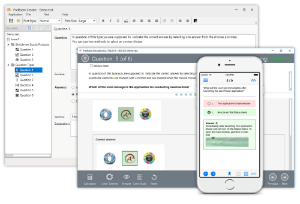

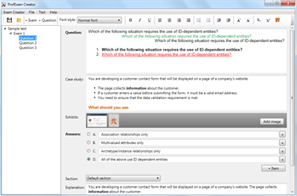

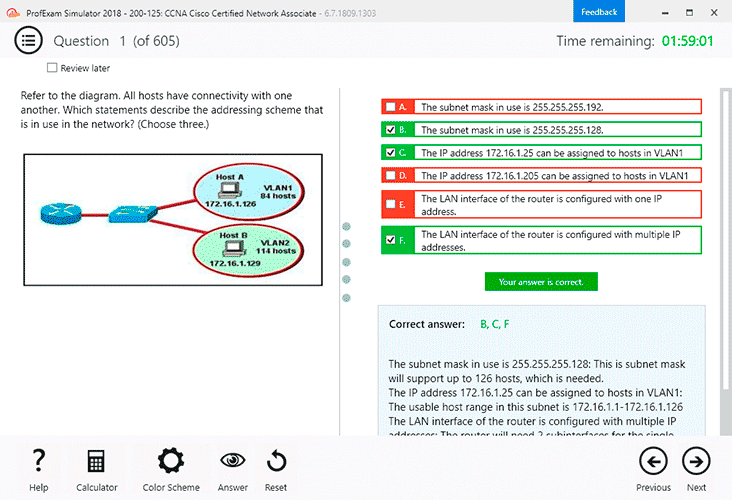

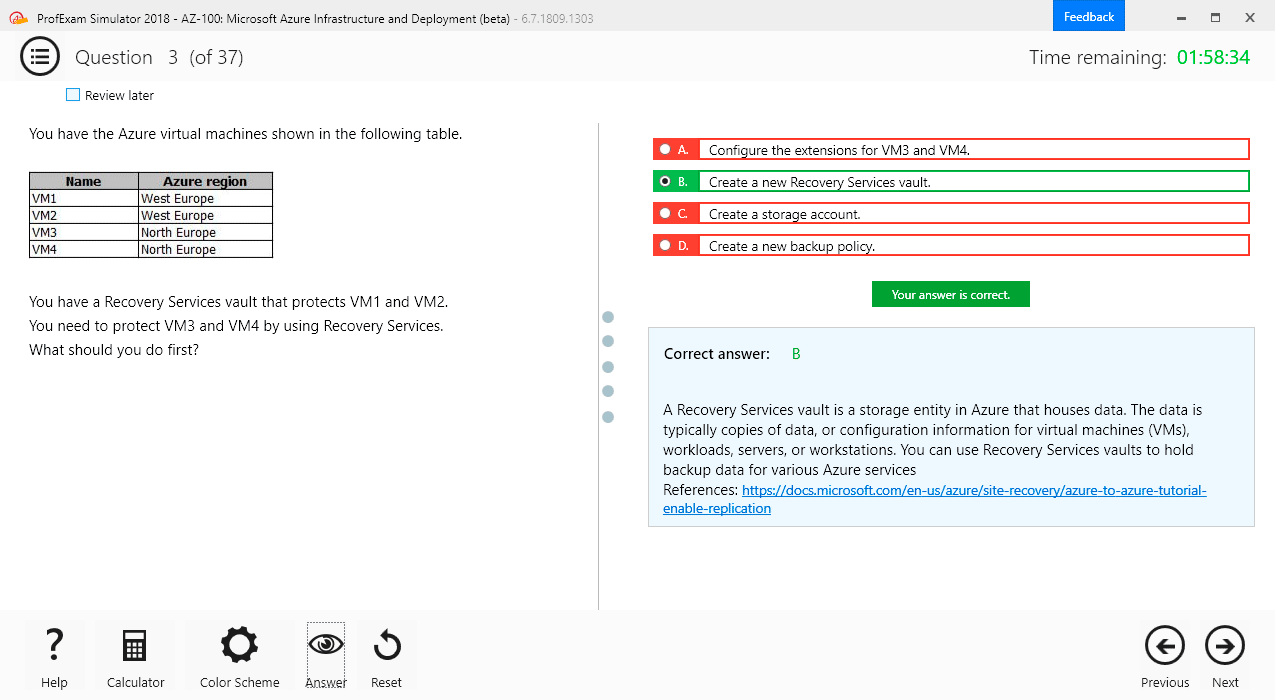


Demo Questions

Question 1

You need to place the results of a PigLatin script into an HDFS output directory.

What is the correct syntax in Apache Pig?

- update hdfs set D as './output';

- store D into './output';

- place D into './output';

- write D as './output';

- hdfsstore D into './output';

Correct answer: B

Explanation:

Use the STORE operator to run (execute) Pig Latin statements and save (persist) results to the file system. Use STORE for production scripts and batch mode processing.

Syntax: STORE alias INTO 'directory' [USING function];

Example: In this example data is stored using PigStorage and the asterisk character (*) as the field delimiter.

A = LOAD 'data' AS (a1:int,a2:int,a3:int);

DUMP A;

(1,2,3)

(4,2,1)

(8,3,4)

(4,3,3)

(7,2,5)

(8,4,3)

STORE A INTO 'myoutput' USING PigStorage ('*');

CAT myoutput;

1*2*3

4*2*1

8*3*4

4*3*3

7*2*5

8*4*3

References: https://pig.apache.org/docs/r0.13.0/basic.html#store
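The effect of the delimiter argument in the example above can be illustrated outside Pig. The following is a minimal Python sketch, not Pig code: the function name `pig_storage_lines` is hypothetical, and it only mimics how a field-delimited storer would render tuples as text lines (tab is used as the default here because that is PigStorage's default delimiter).

```python
def pig_storage_lines(tuples, delimiter="\t"):
    """Render each tuple as one delimited text line (illustrative helper, not a Pig API)."""
    return [delimiter.join(str(field) for field in row) for row in tuples]


# Relation A from the example above.
A = [(1, 2, 3), (4, 2, 1), (8, 3, 4), (4, 3, 3), (7, 2, 5), (8, 4, 3)]

# Equivalent in spirit to: STORE A INTO 'myoutput' USING PigStorage('*');
lines = pig_storage_lines(A, delimiter="*")
print("\n".join(lines))
```

Each printed line corresponds to one stored record, matching the `1*2*3` style output listed in the explanation.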

Question 2

How is Oracle Loader for Hadoop (OLH) better than Apache Sqoop?

- OLH performs a great deal of preprocessing of the data on Hadoop before loading it into the database.

- OLH performs a great deal of preprocessing of the data on the Oracle database before loading it into NoSQL.

- OLH does not use MapReduce to process any of the data, thereby increasing performance.

- OLH performs a great deal of preprocessing of the data on the Oracle database before loading it into Hadoop.

- OLH is fully supported on the Big Data Appliance. Apache Sqoop is not supported on the Big Data Appliance.

Correct answer: A

Explanation:

Oracle Loader for Hadoop provides an efficient and high-performance loader for fast movement of data from a Hadoop cluster into a table in an Oracle database. Oracle Loader for Hadoop prepartitions the data if necessary and transforms it into a database-ready format. It optionally sorts records by primary key or user-defined columns before loading the data or creating output files.

Note: Apache Sqoop(TM) is a tool designed for efficiently transferring bulk data between Apache Hadoop and structured datastores such as relational databases.

Incorrect Answers:

B, D: Oracle Loader for Hadoop moves data from a Hadoop cluster into a table in an Oracle database, so the preprocessing happens on Hadoop and the target is the database, not NoSQL or Hadoop.

C: Oracle Loader for Hadoop is a MapReduce application that is invoked as a command-line utility. It accepts the generic command-line options that are supported by the org.apache.hadoop.util.Tool interface.

E: The Oracle Linux operating system and Cloudera's Distribution including Apache Hadoop (CDH) underlie all other software components installed on Oracle Big Data Appliance. CDH includes Apache projects for MapReduce and HDFS, such as Hive, Pig, Oozie, ZooKeeper, HBase, Sqoop, and Spark.

References:

https://docs.oracle.com/cd/E37231_01/doc.20/e36961/start.htm#BDCUG326

https://docs.oracle.com/cd/E55905_01/doc.40/e55814/concepts.htm#BIGUG117
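The preprocessing described in the explanation (prepartitioning, then optional sorting within each partition) can be sketched in a few lines. This is a simplified Python illustration under stated assumptions, not OLH code: the function `prepartition_and_sort`, the `region` partition column, and the `id` sort key are all hypothetical.

```python
from collections import defaultdict


def prepartition_and_sort(records, partition_key, sort_key):
    """Group records by target partition, then sort each group (illustrative only)."""
    partitions = defaultdict(list)
    for record in records:
        partitions[partition_key(record)].append(record)
    return {name: sorted(rows, key=sort_key) for name, rows in partitions.items()}


rows = [
    {"id": 7, "region": "EU"},
    {"id": 2, "region": "US"},
    {"id": 5, "region": "EU"},
    {"id": 1, "region": "US"},
]

# Partition by the hypothetical "region" column, sort by the hypothetical key "id".
ready = prepartition_and_sort(rows, lambda r: r["region"], lambda r: r["id"])
```

Doing this work on the Hadoop side means the database receives data that is already grouped and ordered for loading.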

Question 3

Which three pieces of hardware are present on each node of the Big Data Appliance? (Choose three.)

- high capacity SAS disks

- memory

- redundant Power Delivery Units

- InfiniBand ports

- InfiniBand leaf switches

Correct answer: ABD

Explanation:

Big Data Appliance Hardware Specification and Details, example:

Per Node:

- 2 x Eight-Core Intel® Xeon® E5-2660 Processors (2.2 GHz)

- 64 GB Memory (expandable to 256GB)

- Disk Controller HBA with 512MB Battery backed write cache

- 12 x 3TB 7,200 RPM High Capacity SAS Disks

- 2 x QDR (Quad Data Rate InfiniBand)(40Gb/s) Ports

- 4 x 10 Gb Ethernet Ports

- 1 x ILOM Ethernet Port

References: http://www.oracle.com/technetwork/server-storage/engineered-systems/bigdata-appliance/overview/bigdataappliancev2-datasheet-1871638.pdf

Question 4

What two actions do the following commands perform in the Oracle R Advanced Analytics for Hadoop Connector? (Choose two.)

ore.connect(type="HIVE")

ore.attach()

- Connect to Hive.

- Attach the Hadoop libraries to R.

- Attach the current environment to the search path of R.

- Connect to NoSQL via Hive.

Correct answer: AC

Explanation:

You can connect to Hive and manage objects using R functions that have an ore prefix, such as ore.connect.

To attach the current environment to the search path of R, use:

ore.attach()

References: https://docs.oracle.com/cd/E49465_01/doc.23/e49333/orch.htm#BDCUG400

Question 5

Your customer’s security team needs to understand how the Oracle Loader for Hadoop Connector writes data to the Oracle database.

Which service performs the actual writing?

- OLH agent

- reduce tasks

- write tasks

- map tasks

- NameNode

Correct answer: B

Explanation:

Oracle Loader for Hadoop has online and offline load options. In the online load option, the data is both preprocessed and loaded into the database as part of the Oracle Loader for Hadoop job. Each reduce task makes a connection to Oracle Database, loading into the database in parallel. The database has to be available during the execution of Oracle Loader for Hadoop.

References: http://www.oracle.com/technetwork/bdc/hadoop-loader/connectors-hdfs-wp-1674035.pdf
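The online load pattern described above, where each reduce task opens its own connection and loads in parallel, can be simulated roughly as follows. This is a Python sketch under loud assumptions: `FakeDatabase` and `reduce_task` are hypothetical stand-ins, threads play the role of reduce tasks, and nothing here is an Oracle or Hadoop API.

```python
import threading
from concurrent.futures import ThreadPoolExecutor


class FakeDatabase:
    """Hypothetical stand-in for the target database; not an Oracle API."""

    def __init__(self):
        self._lock = threading.Lock()
        self.rows = []
        self.connections_opened = 0

    def connect(self):
        # Count one connection per caller; a real driver would return a session.
        with self._lock:
            self.connections_opened += 1
        return self

    def insert_batch(self, batch):
        with self._lock:
            self.rows.extend(batch)


def reduce_task(db, partition):
    """Each simulated reduce task opens its own connection and loads its partition."""
    conn = db.connect()
    conn.insert_batch(sorted(partition))
    return len(partition)


db = FakeDatabase()
partitions = [[3, 1], [6, 4, 5], [2]]  # one list per simulated reducer

# Threads stand in for reduce tasks running in parallel.
with ThreadPoolExecutor(max_workers=len(partitions)) as pool:
    loaded = list(pool.map(lambda p: reduce_task(db, p), partitions))
```

The sketch also shows why the database must be available for the whole job: every reduce task depends on `connect()` succeeding.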

Question 6

Your customer needs to manage configuration information on the Big Data Appliance.

Which service would you choose?

- SparkPlug

- ApacheManager

- Zookeeper

- Hive Server

- JobMonitor

Correct answer: C

Explanation:

The ZooKeeper utility provides configuration and state management and distributed coordination services to Dgraph nodes of the Big Data Discovery cluster. It ensures high availability of the query processing by the Dgraph nodes in the cluster.

References: https://docs.oracle.com/cd/E57471_01/bigData.100/admin_bdd/src/cadm_cluster_zookeeper.html

Question 7

You are helping your customer troubleshoot the use of the Oracle Loader for Hadoop Connector in online mode. You have performed steps 1, 2, 4, and 5.

STEP 1: Connect to the Oracle database and create a target table.

STEP 2: Log in to the Hadoop cluster (or client).

STEP 3: Missing step

STEP 4: Create a shell script to run the OLH job.

STEP 5: Run the OLH job.

What step is missing between step 2 and step 4?

- Diagnose the job failure and correct the error.

- Copy the table metadata to the Hadoop system.

- Create an XML configuration file.

- Query the table to check the data.

- Create an OLH metadata file.

Correct answer: C

Question 8

The hdfs_stream script is used by the Oracle SQL Connector for HDFS to perform a specific task to access data.

What is the purpose of this script?

- It is the preprocessor script for the Impala table.

- It is the preprocessor script for the HDFS external table.

- It is the streaming script that creates a database directory.

- It is the preprocessor script for the Oracle partitioned table.

- It defines the jar file that points to the directory where Hive is installed.

Correct answer: B

Explanation:

The hdfs_stream script is the preprocessor for the Oracle Database external table created by Oracle SQL Connector for HDFS.

References: https://docs.oracle.com/cd/E37231_01/doc.20/e36961/start.htm#BDCUG107

Question 9

How should you encrypt the Hadoop data that sits on disk?

- Enable Transparent Data Encryption by using the Mammoth utility.

- Enable HDFS Transparent Encryption by using bdacli on a Kerberos-secured cluster.

- Enable HDFS Transparent Encryption on a non-Kerberos secured cluster.

- Enable Audit Vault and Database Firewall for Hadoop by using the Mammoth utility.

Correct answer: B

Explanation:

HDFS Transparent Encryption protects Hadoop data that’s at rest on disk. When the encryption is enabled for a cluster, data write and read operations on encrypted zones (HDFS directories) on the disk are automatically encrypted and decrypted. This process is “transparent” because it’s invisible to the application working with the data.

The cluster where you want to use HDFS Transparent Encryption must have Kerberos enabled.

Incorrect Answers:

C: The cluster where you want to use HDFS Transparent Encryption must have Kerberos enabled.

References: https://docs.oracle.com/en/cloud/paas/big-data-cloud/csbdi/using-hdfs-transparent-encryption.html#GUID-16649C5A-2C88-4E75-809A-BBF8DE250EA3

Question 10

What two things does the Big Data SQL push down to the storage cell on the Big Data Appliance? (Choose two.)

- Transparent Data Encrypted data

- the column selection of data from individual Hadoop nodes

- WHERE clause evaluations

- PL/SQL evaluation

- Business Intelligence queries from connected Exalytics servers

Correct answer: BC
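Options B and C in this question describe column selection and WHERE clause evaluation. As a rough illustration of what pushing those two operations down to the storage side would look like, here is a minimal Python sketch. It is not Big Data SQL code: `storage_scan`, the table layout, and the column names are hypothetical.

```python
def storage_scan(rows, columns, predicate):
    """Runs on the storage side: filter rows first, then keep only requested columns."""
    return [{c: row[c] for c in columns} for row in rows if predicate(row)]


# Hypothetical rows held on one storage node, including a wide column the query ignores.
node_data = [
    {"id": 1, "country": "DE", "amount": 40, "notes": "x" * 100},
    {"id": 2, "country": "US", "amount": 75, "notes": "y" * 100},
    {"id": 3, "country": "DE", "amount": 90, "notes": "z" * 100},
]

# Roughly: SELECT id, amount FROM t WHERE country = 'DE'
result = storage_scan(node_data, ["id", "amount"], lambda r: r["country"] == "DE")
```

Because the filter and the projection run where the data lives, only the two matching rows and two requested columns travel back to the query engine, rather than every row with its wide `notes` field.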